Photo by the editor

# Introduction

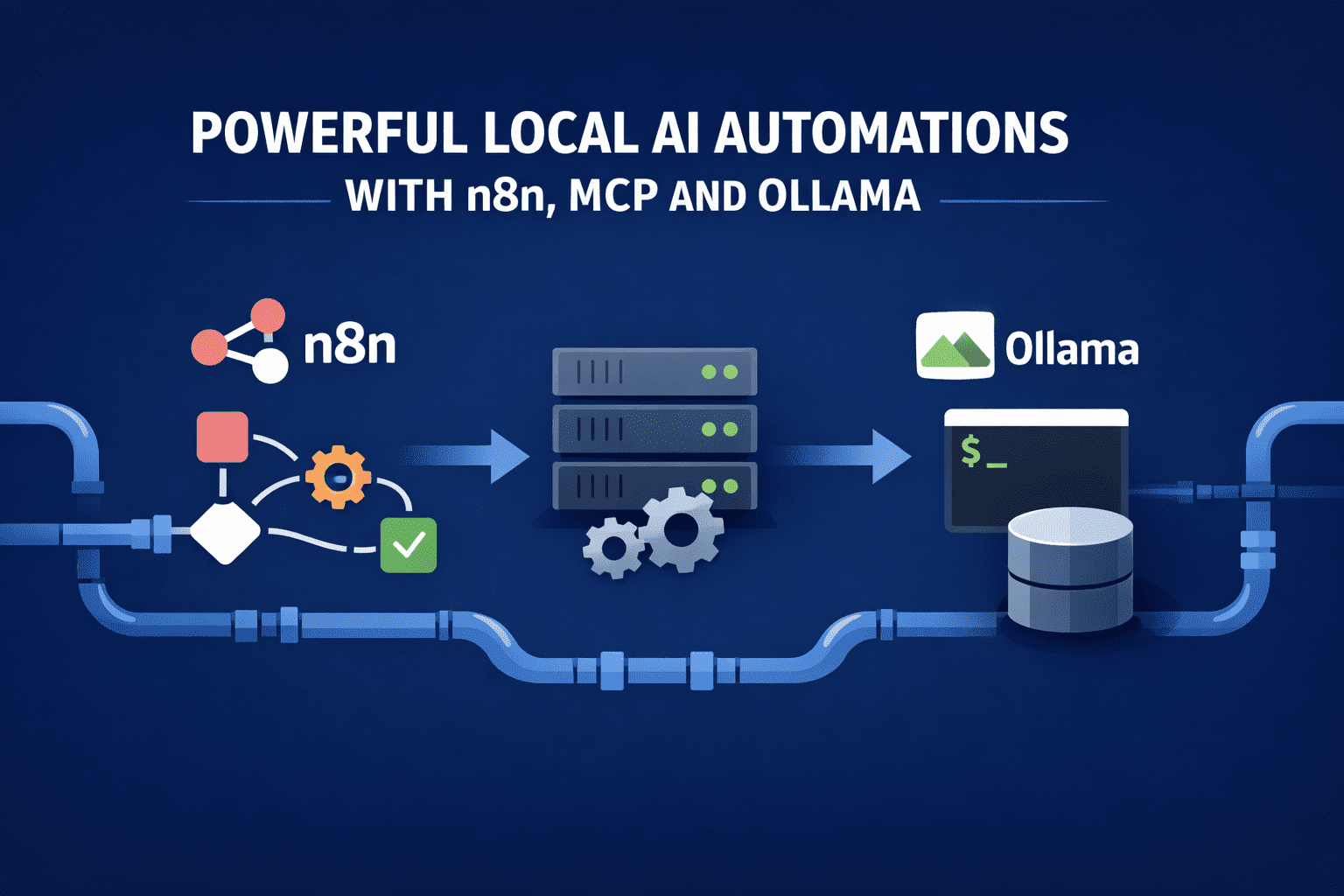

Running large language models (LLMs) locally only matters if they do real work. The value of n8n, the Model Context Protocol (MCP), and Ollama is not architectural elegance, but the ability to automate tasks that would otherwise require engineering time.

This stack works when each component has a clear responsibility: n8n orchestrates, MCP constrains tool usage, and Ollama reasons over local data.

The ultimate goal is to run these automations on a single workstation or small server, replacing brittle scripts and expensive API-based systems.

# Automated log triage with root cause hypothesis generation

This automation starts with n8n ingesting application logs every five minutes from a local directory or a Kafka consumer. n8n performs deterministic preprocessing: grouping by service, deduplicating repeated stack traces, and extracting timestamps and error codes. Only the condensed log bundle is passed to Ollama.
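A minimal sketch of that deterministic preprocessing step, written in Python for readability (in n8n it could live in a Code node or an external script). The single-line log format, the field names, and the `condense` helper are assumptions for illustration; repeated stack traces are approximated by a message signature with digits masked out.

```python
import re
from collections import defaultdict

# Assumed log format (hypothetical), one event per line:
# 2024-05-01T12:00:01Z service=payments level=ERROR code=E502 msg=upstream timeout
LINE_RE = re.compile(
    r"^(?P<ts>\S+) service=(?P<service>\S+) level=(?P<level>\S+) "
    r"code=(?P<code>\S+) msg=(?P<msg>.*)$"
)


def condense(lines):
    """Group ERROR events by service and collapse repeats into one counted entry.

    Assumes lines arrive in chronological order, so the last match is the latest.
    """
    bundle = defaultdict(dict)  # service -> {(code, signature): summary}
    for line in lines:
        m = LINE_RE.match(line.strip())
        if not m or m["level"] != "ERROR":
            continue  # drop unparseable lines and non-error levels
        # Mask numbers so "timeout after 3012ms" and "timeout after 2988ms" dedupe together.
        signature = re.sub(r"\d+", "<n>", m["msg"])
        key = (m["code"], signature)
        entry = bundle[m["service"]].get(key)
        if entry is None:
            bundle[m["service"]][key] = {
                "code": m["code"],
                "signature": signature,
                "first_ts": m["ts"],
                "last_ts": m["ts"],
                "count": 1,
            }
        else:
            entry["count"] += 1
            entry["last_ts"] = m["ts"]
    return {service: list(entries.values()) for service, entries in bundle.items()}
```

Two payments timeouts with different millisecond values and one INFO line from another service would collapse into a single payments entry with a count of 2, which is the kind of compact bundle the prompt should see.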

The local model receives a narrowly scoped prompt asking it to cluster the failures, identify the first causal event, and generate two to three plausible root-cause hypotheses. MCP exposes a single tool: `query_recent_deployments`. When the model requests it, n8n queries the deployment database and returns the result. The model then updates its hypotheses and produces JSON output.
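The tool below is described using the shape MCP uses for tool listings (`name`, `description`, and an `inputSchema` written as JSON Schema). The in-memory `DEPLOYMENTS` list, the `window_minutes` parameter, and the `dispatch` helper are hypothetical stand-ins for the deployment database query n8n would actually run; the point of the sketch is that only declared tools with declared arguments are ever executed.

```python
from datetime import datetime, timedelta

# Tool description in the shape of an MCP tool listing entry.
QUERY_RECENT_DEPLOYMENTS = {
    "name": "query_recent_deployments",
    "description": "List deployments of a service within the last N minutes.",
    "inputSchema": {
        "type": "object",
        "properties": {
            "service": {"type": "string"},
            "window_minutes": {"type": "integer", "minimum": 1},
        },
        "required": ["service"],
    },
}

# Hypothetical stand-in for the deployment database.
DEPLOYMENTS = [
    {"service": "payments", "version": "2.4.1", "at": datetime(2024, 5, 1, 11, 50)},
    {"service": "payments", "version": "2.4.0", "at": datetime(2024, 4, 30, 9, 0)},
]


def query_recent_deployments(service, window_minutes=60, now=None):
    now = now or datetime.now()
    cutoff = now - timedelta(minutes=window_minutes)
    return [
        {"version": d["version"], "at": d["at"].isoformat()}
        for d in DEPLOYMENTS
        if d["service"] == service and d["at"] >= cutoff
    ]


TOOLS = {"query_recent_deployments": (QUERY_RECENT_DEPLOYMENTS, query_recent_deployments)}


def dispatch(tool_call, now=None):
    """Run a model-requested tool only if it is declared; drop undeclared arguments."""
    name = tool_call.get("name")
    if name not in TOOLS:
        return {"error": f"unknown tool: {name}"}
    schema, handler = TOOLS[name]
    props = schema["inputSchema"]["properties"]
    args = {k: v for k, v in tool_call.get("arguments", {}).items() if k in props}
    missing = [k for k in schema["inputSchema"]["required"] if k not in args]
    if missing:
        return {"error": f"missing arguments: {missing}"}
    return {"result": handler(now=now, **args)}
```

The sketch does not type-check argument values against the schema; a real deployment would add that before touching the database.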

n8n stores the output, posts a summary to an internal Slack channel, and opens a ticket only if the confidence exceeds a set threshold. No cloud LLM is involved, and the model never sees raw, unprocessed logs.
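One way to express the gate n8n applies to the model's JSON. The `hypotheses`/`confidence` schema, the 0.8 threshold, and the action names are assumptions; the essential idea is that malformed output never triggers side effects, and only a sufficiently confident top hypothesis opens a ticket.

```python
import json

CONFIDENCE_THRESHOLD = 0.8  # assumed value; tune against past incidents


def route_triage(raw_output, threshold=CONFIDENCE_THRESHOLD):
    """Validate the model's JSON and return the actions n8n should take."""
    try:
        result = json.loads(raw_output)
    except json.JSONDecodeError:
        return {"actions": ["store_for_review"], "reason": "invalid JSON"}
    if not isinstance(result, dict):
        return {"actions": ["store_for_review"], "reason": "unexpected shape"}
    raw = result.get("hypotheses")
    # Keep only well-formed hypotheses with a numeric confidence.
    hypotheses = (
        [h for h in raw if isinstance(h, dict) and isinstance(h.get("confidence"), (int, float))]
        if isinstance(raw, list)
        else []
    )
    if not hypotheses:
        return {"actions": ["store_for_review"], "reason": "no hypotheses"}
    top = max(hypotheses, key=lambda h: h["confidence"])
    actions = ["store", "post_slack_summary"]
    if top["confidence"] > threshold:
        actions.append("open_ticket")
    return {"actions": actions, "top_hypothesis": top}
```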

# Continuous data quality monitoring for analytical pipelines

n8n watches incoming batch tables in local storage and compares their schemas against historical baselines. When drift is detected, the workflow sends Ollama a concise description of the change rather than the full dataset.
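A sketch of the schema comparison, assuming each table's schema is available as a `{column: type}` mapping (how n8n obtains it depends on the storage layer). The output is deliberately a few short lines, which is what gets sent to Ollama instead of the data itself.

```python
def schema_drift(baseline, current):
    """Compare {column: type} mappings and describe the differences in plain lines."""
    added = sorted(set(current) - set(baseline))
    removed = sorted(set(baseline) - set(current))
    retyped = sorted(
        (col, baseline[col], current[col])
        for col in set(baseline) & set(current)
        if baseline[col] != current[col]
    )
    lines = [f"added column {c} ({current[c]})" for c in added]
    lines += [f"removed column {c}" for c in removed]
    lines += [f"column {c} changed type {old} -> {new}" for c, old, new in retyped]
    return lines  # empty list means no drift
```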

The model's job is to determine whether the drift is benign, suspicious, or breaking. MCP exposes two tools: `sample_rows` and `compute_column_stats`. The model requests these tools selectively, inspects the returned values, and produces a classification with a human-readable explanation.
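Hypothetical handlers for the two tools, operating on rows represented as a list of dicts. Both return small, bounded payloads so the model sees summaries and a handful of examples rather than the full batch; the parameter names and the fields in the returned stats are assumptions.

```python
import random
import statistics


def compute_column_stats(rows, column):
    """Hypothetical tool handler: summary statistics for one column of a batch."""
    values = [r.get(column) for r in rows]
    present = [v for v in values if v is not None]
    stats = {
        "column": column,
        "row_count": len(values),
        "null_fraction": round(1 - len(present) / len(values), 4) if values else 0.0,
        "distinct": len(set(present)),  # assumes scalar (hashable) values
    }
    numeric = [v for v in present if isinstance(v, (int, float)) and not isinstance(v, bool)]
    if numeric:
        stats["mean"] = statistics.fmean(numeric)
        stats["min"] = min(numeric)
        stats["max"] = max(numeric)
    return stats


def sample_rows(rows, n=5, seed=0):
    """Hypothetical tool handler: up to n rows, seeded so repeated calls agree."""
    rng = random.Random(seed)
    return rng.sample(rows, min(n, len(rows)))
```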

If the drift is classified as breaking, n8n automatically pauses the affected pipeline and records the event along with the model's reasoning. Over time, teams accumulate a searchable archive of past schema changes and decisions, all generated locally.

# Autonomous dataset labeling and validation loops for machine learning pipelines

This automation is intended for teams training models on continuously arriving data, where manual labeling becomes a bottleneck. n8n monitors a local data drop directory or database table and batches new, unlabeled records at fixed intervals.

Each batch is preprocessed deterministically to remove duplicates, normalize fields, and attach minimal metadata before inference runs.
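A minimal version of that deterministic pass, assuming records arrive as dicts with a free-text `text` field. The normalization rule (collapse whitespace, dedupe case-insensitively) and the `keep_meta` whitelist are illustrative choices, not requirements of n8n or Ollama.

```python
def prepare_batch(records, text_field="text", keep_meta=("id", "source")):
    """Collapse whitespace, drop empty and duplicate texts, keep only whitelisted metadata."""
    seen = set()
    batch = []
    for rec in records:
        text = " ".join(str(rec.get(text_field, "")).split())
        key = text.casefold()  # duplicates are detected case-insensitively
        if not text or key in seen:
            continue
        seen.add(key)
        item = {k: rec[k] for k in keep_meta if k in rec}
        item[text_field] = text
        batch.append(item)
    return batch
```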

Ollama receives only the cleaned batch and is instructed to produce labels with confidence scores, not free-form text. MCP exposes a narrow set of tools so the model can validate its own results against historical distributions and sampling checks before anything is accepted. n8n then decides whether the labels are approved automatically, approved partially, or routed to humans.
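The approval decision itself can stay entirely deterministic. This sketch assumes the model returns one object per record with `label` and `confidence` fields; items with an unknown label or low confidence go to review, and the share of reviewed items decides whether the batch counts as automatically approved, partially approved, or sent to humans. Both thresholds are placeholder values.

```python
def decide_batch(labels, allowed_labels, threshold=0.85, max_review_fraction=0.3):
    """Split model labels into accepted and review sets, then classify the whole batch."""
    accepted, review = [], []
    for item in labels:
        ok = (
            item.get("label") in allowed_labels
            and isinstance(item.get("confidence"), (int, float))
            and item["confidence"] >= threshold
        )
        (accepted if ok else review).append(item)
    if not review:
        decision = "auto_approved"
    elif len(review) / len(labels) <= max_review_fraction:
        decision = "partially_approved"  # accept the confident items, review the rest
    else:
        decision = "sent_to_humans"
    return decision, accepted, review
```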

Key elements of the loop:

- Initial label generation: The local model assigns labels and confidence values based solely on the provided schema and examples, creating structured JSON that n8n can check without interpretation.

- Statistical drift verification: Using MCP, the model requests label distribution statistics from previous batches and flags deviations that suggest concept drift or misclassification.

- Low-confidence escalation: n8n automatically routes samples below the confidence threshold to human reviewers and accepts the rest, maintaining high throughput without sacrificing accuracy.

- Feedback reinjection: Human corrections are fed back into the system as new reference examples that the model can retrieve through MCP in future passes.
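The statistical drift check in the list above could be backed by something as simple as the total variation distance between the historical label distribution and the current batch, exposed to the model as an MCP tool result. Total variation is one reasonable choice among several (a chi-squared test or KL divergence would also work), and the 0.2 tolerance is an assumption.

```python
from collections import Counter


def label_distribution(labels):
    """Relative frequency of each label; empty input gives an empty distribution."""
    counts = Counter(labels)
    total = sum(counts.values())
    return {label: n / total for label, n in counts.items()} if total else {}


def total_variation(p, q):
    """Half the L1 distance between two discrete distributions (0 = identical, 1 = disjoint)."""
    keys = set(p) | set(q)
    return 0.5 * sum(abs(p.get(k, 0.0) - q.get(k, 0.0)) for k in keys)


def drift_flag(history_labels, batch_labels, max_tv=0.2):
    """Return the distance and whether it exceeds the (assumed) tolerance."""
    tv = total_variation(label_distribution(history_labels), label_distribution(batch_labels))
    return tv, tv > max_tv
```

A history split evenly between two labels compared with a batch that is 90% one label gives a distance of 0.4, which would be flagged.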

This creates a closed-loop labeling system that scales locally, improves over time, and removes people from the critical path unless they are truly needed.

# Self-updating research reports from internal and external sources

This automation runs on a nightly schedule. n8n pulls new commits from selected repositories, the latest internal documents, and a curated set of saved articles. Each item is split into chunks and embedded locally.
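Chunking is usually a fixed-size window with some overlap, so that text near a boundary appears in two chunks. The sizes below are assumptions, and character windows are only the simplest option (token- or heading-aware splitting is also common); each chunk would then be embedded by a local embedding model served through Ollama.

```python
def chunk_text(text, size=800, overlap=100):
    """Split text into overlapping character windows of at most `size` characters."""
    if overlap >= size:
        raise ValueError("overlap must be smaller than size")
    chunks, start = [], 0
    while start < len(text):
        chunks.append(text[start:start + size])
        if start + size >= len(text):
            break  # the last window already reaches the end of the text
        start += size - overlap
    return chunks
```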

Ollama is prompted to update an existing research brief rather than write a new one. MCP provides search tools that let the model inspect previous summaries and embeddings. The model identifies what has changed, rewrites only the affected sections, and flags contradictions or outdated claims.
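One deterministic way for n8n to narrow down what has changed before the model gets involved: hash every source chunk, compare against the hashes stored on the previous run, and select only the brief sections whose sources changed. The `section_sources` mapping from brief sections to chunk IDs is a hypothetical bookkeeping structure; new chunks that no section references yet would still rely on the model's search tools to be placed.

```python
import hashlib


def fingerprint(text):
    return hashlib.sha256(text.encode("utf-8")).hexdigest()


def sections_to_rewrite(section_sources, old_hashes, new_chunks):
    """Return sections whose source chunks changed, appeared, or disappeared.

    section_sources: {section: [chunk_id, ...]}  (hypothetical bookkeeping)
    old_hashes:      {chunk_id: sha256 hex} from the previous run
    new_chunks:      {chunk_id: text} from tonight's run
    """
    new_hashes = {cid: fingerprint(text) for cid, text in new_chunks.items()}
    changed = {
        cid
        for cid in set(old_hashes) | set(new_hashes)
        if old_hashes.get(cid) != new_hashes.get(cid)
    }
    return sorted(s for s, cids in section_sources.items() if changed & set(cids))
```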

n8n pushes the updated brief back to the repository and records the diff. The result is a living document that evolves without manual rewriting, driven entirely by local insights.
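Recording the difference needs nothing beyond Python's standard `difflib`. This sketch produces a unified diff of the old and new brief that could be stored alongside the commit; the file name is a placeholder.

```python
import difflib


def brief_diff(old_text, new_text, name="research_brief.md"):
    """Unified diff between two versions of the brief (empty string if identical)."""
    return "".join(
        difflib.unified_diff(
            old_text.splitlines(keepends=True),
            new_text.splitlines(keepends=True),
            fromfile=f"a/{name}",
            tofile=f"b/{name}",
        )
    )
```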

# Automated postmortems with evidence linking

Once an incident is closed, n8n assembles a timeline from alerts, logs, and deployment events. Instead of asking the model to write a narrative blindly, the workflow splits the timeline into strictly chronological blocks.
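A sketch of the splitting step, assuming each event is a dict with an `id`, a `datetime` under `ts`, and a `kind`. Events are sorted first, and a new block begins once an event falls at least `window` after the first event of the current block; the ten-minute window is an arbitrary default.

```python
from datetime import timedelta


def timeline_blocks(events, window=timedelta(minutes=10)):
    """Sort events by time and cut them into consecutive blocks.

    A new block starts when an event is at least `window` after the
    first event of the current block.
    """
    blocks, current, block_start = [], [], None
    for event in sorted(events, key=lambda e: e["ts"]):
        if block_start is None or event["ts"] - block_start >= window:
            if current:
                blocks.append(current)
            current, block_start = [], event["ts"]
        current.append(event)
    if current:
        blocks.append(current)
    return blocks
```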

The model is instructed to produce a postmortem with explicit references to events in the timeline. MCP exposes a `fetch_event_details` tool that the model can call when context is missing. Each paragraph of the final report cites specific evidence.

n8n rejects any output with missing citations and re-prompts the model. The final document is consistent, auditable, and generated without exposing operational data externally.
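The citation check becomes mechanical once the prompt requires a fixed citation format. This sketch assumes the model cites events as `[E12]` and that paragraphs are separated by blank lines; any paragraph with no citation, or citing an event ID that is not in the timeline, makes n8n reject the draft.

```python
import re

CITATION = re.compile(r"\[(E\d+)\]")  # assumed citation format, e.g. "[E12]"


def uncited_paragraphs(report, known_event_ids):
    """Return indexes of paragraphs with no citation or with citations to unknown events."""
    bad = []
    paragraphs = [p for p in report.split("\n\n") if p.strip()]
    for i, para in enumerate(paragraphs):
        cited = CITATION.findall(para)
        if not cited or any(c not in known_event_ids for c in cited):
            bad.append(i)
    return bad
```

In the workflow, a non-empty result would be fed into the re-prompt, naming the offending paragraphs, with a retry cap so the loop always terminates.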

# Automated local contract and policy review

Legal and compliance teams run this automation on internal machines. n8n ingests new contract drafts and policy updates, strips formatting, and segments them into clauses.
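A rough sketch of the cleanup and segmentation, assuming drafts have been converted to text containing HTML-style tags and that clauses start on their own line with numbers such as `1.` or `2.1.`. Real contracts vary a lot, so this regex-based splitter is a placeholder for whatever rule matches your templates; text before the first numbered clause (for example, the title) is dropped.

```python
import re

TAG = re.compile(r"<[^>]+>")
# Assumed clause markers: a line starting with "1." or "2.1." followed by whitespace.
CLAUSE_START = re.compile(r"^\s*(\d+(?:\.\d+)*)\.\s+", re.MULTILINE)


def segment_clauses(document):
    """Strip tags, then map each clause number to its whitespace-normalized text."""
    text = TAG.sub("", document)
    starts = list(CLAUSE_START.finditer(text))
    clauses = {}
    for i, m in enumerate(starts):
        end = starts[i + 1].start() if i + 1 < len(starts) else len(text)
        clauses[m.group(1)] = " ".join(text[m.end():end].split())
    return clauses
```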

Ollama is asked to compare each clause with the approved baseline and report any deviations. MCP exposes a `retrieve_standard_clause` tool, allowing the model to reference canonical language. The output includes exact clause references, a risk level, and suggested fixes.

n8n forwards high-risk findings to reviewers and automatically approves unchanged sections. Sensitive documents never leave the local environment.
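Automatically approving unchanged sections is safest as an exact comparison after whitespace and case normalization, because a single changed number in a clause can matter a great deal. The sketch assumes the model's risk level arrives as `"high"`, `"medium"`, or `"low"`; the workflow does not say what happens to non-high findings, so `attach_to_summary` is a hypothetical choice. `changed_spans` uses `difflib.SequenceMatcher` to show reviewers exactly which words differ from the standard.

```python
import difflib


def _normalize(text):
    """Lowercase and collapse whitespace so formatting noise is not treated as a change."""
    return " ".join(text.lower().split())


def route_clause(clause, standard, model_risk):
    """Unchanged clauses are auto-approved; high-risk deviations go to a reviewer."""
    if _normalize(clause) == _normalize(standard):
        return "auto_approve"
    if model_risk == "high":
        return "send_to_reviewer"
    return "attach_to_summary"  # hypothetical handling for medium/low risk


def changed_spans(clause, standard):
    """Word-level (standard, draft) pairs that differ, for the reviewer's context."""
    a, b = _normalize(standard).split(), _normalize(clause).split()
    matcher = difflib.SequenceMatcher(None, a, b)
    return [
        (" ".join(a[i1:i2]), " ".join(b[j1:j2]))
        for tag, i1, i2, j1, j2 in matcher.get_opcodes()
        if tag != "equal"
    ]
```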

# Tool-assisted code review for internal repositories

This workflow is triggered by pull requests. n8n extracts the diffs and test results, then sends them to Ollama with instructions to focus only on logic changes and potential failure modes.

With MCP, the model can call `run_static_analysis` and `query_test_failures`. It uses these results to justify its review comments. n8n only posts inline comments when the model identifies specific, reproducible issues.
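The evidence requirement can be enforced by n8n rather than left to the model. This sketch assumes a hypothetical finding format with `file`, `line`, `message`, and an `evidence` list, and keeps a finding only if it points to a concrete line and its evidence matches what the static analyzer or the test run actually reported.

```python
def postable_comments(findings, analysis_issues, failing_tests):
    """Keep findings that name a concrete location and are backed by tool output.

    findings:        model output (hypothetical format, see lead-in)
    analysis_issues: set of (file, line) pairs reported by static analysis
    failing_tests:   set of failing test names
    """
    keep = []
    for f in findings:
        if not f.get("file") or not isinstance(f.get("line"), int):
            continue  # vague findings are never posted inline
        backed = any(
            (e.get("type") == "static_analysis" and (f["file"], f["line"]) in analysis_issues)
            or (e.get("type") == "test_failure" and e.get("test") in failing_tests)
            for e in f.get("evidence", [])
        )
        if backed:
            keep.append(f)
    return keep
```

Style remarks with no tool evidence simply never reach the pull request, which is the behavior described for this reviewer.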

The result is a code reviewer that doesn't hallucinate style opinions and only comments when the evidence supports the claim.

# Final thoughts

Each example limits the model's scope, provides only the necessary tools, and relies on n8n for enforcement. Local inference makes these workflows fast enough to run continuously and cheap enough to leave running. More importantly, it keeps reasoning close to the data and execution under tight control, which is where both belong.

This is where n8n, MCP, and Ollama stop being infrastructure experiments – and start functioning as a practical automation stack.

Nahla Davies is a programmer and technical writer. Before devoting herself full-time to technical writing, she managed, among other intriguing things, to serve as lead programmer for a 5,000-person experiential branding organization whose clients include Samsung, Time Warner, Netflix, and Sony.