Photo by the editor

# Introduction

ChatGPT, Claude, Gemini. You know these names. But here’s the question: What if you ran your own model instead? It sounds ambitious. It isn’t. You can deploy a working large language model (LLM) in less than 10 minutes without spending a dollar.

This article breaks it down. First, let’s figure out what you actually need. Then we’ll look at the actual costs. Finally, we will deploy TinyLlama on Hugging Face for free.

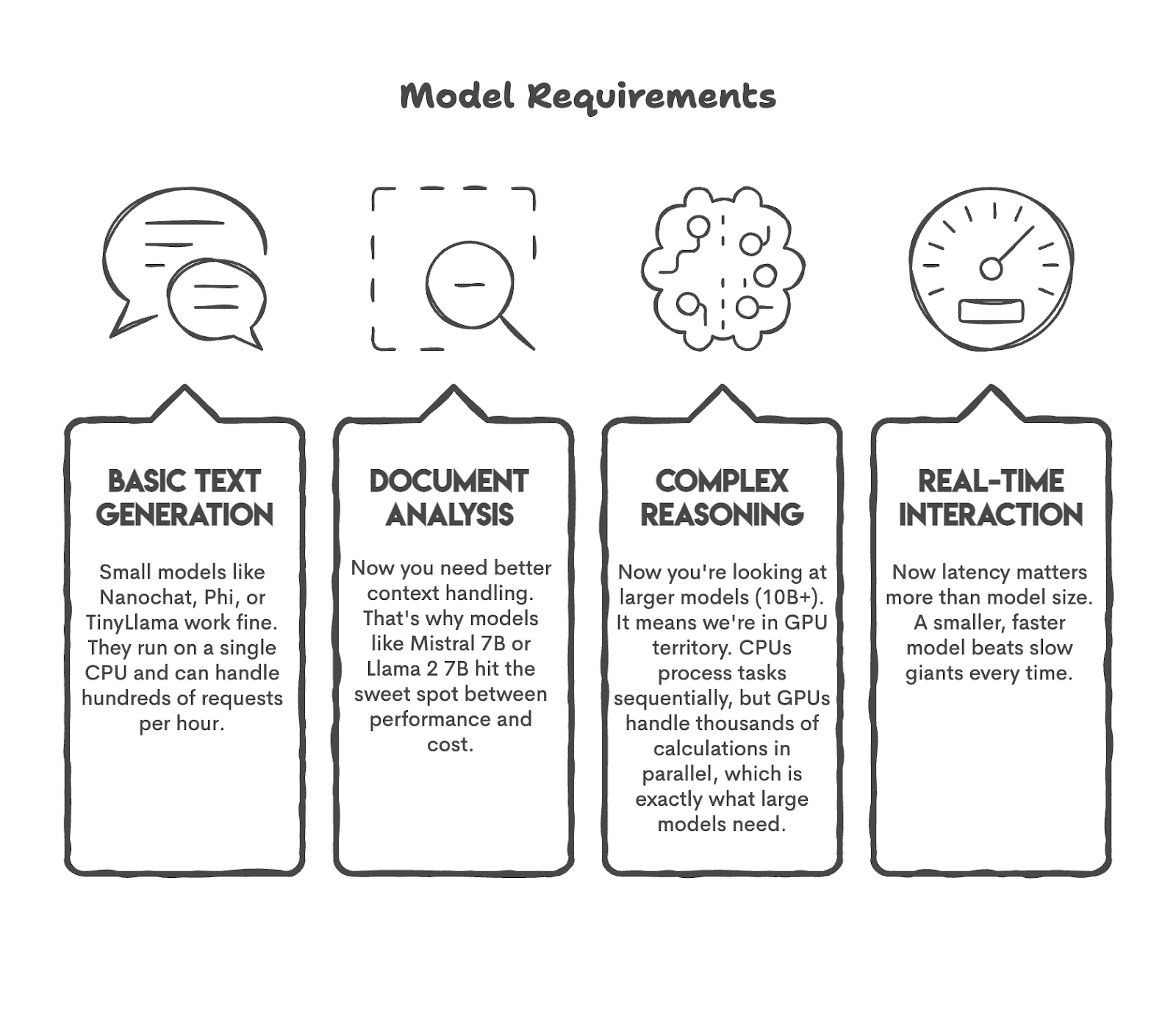

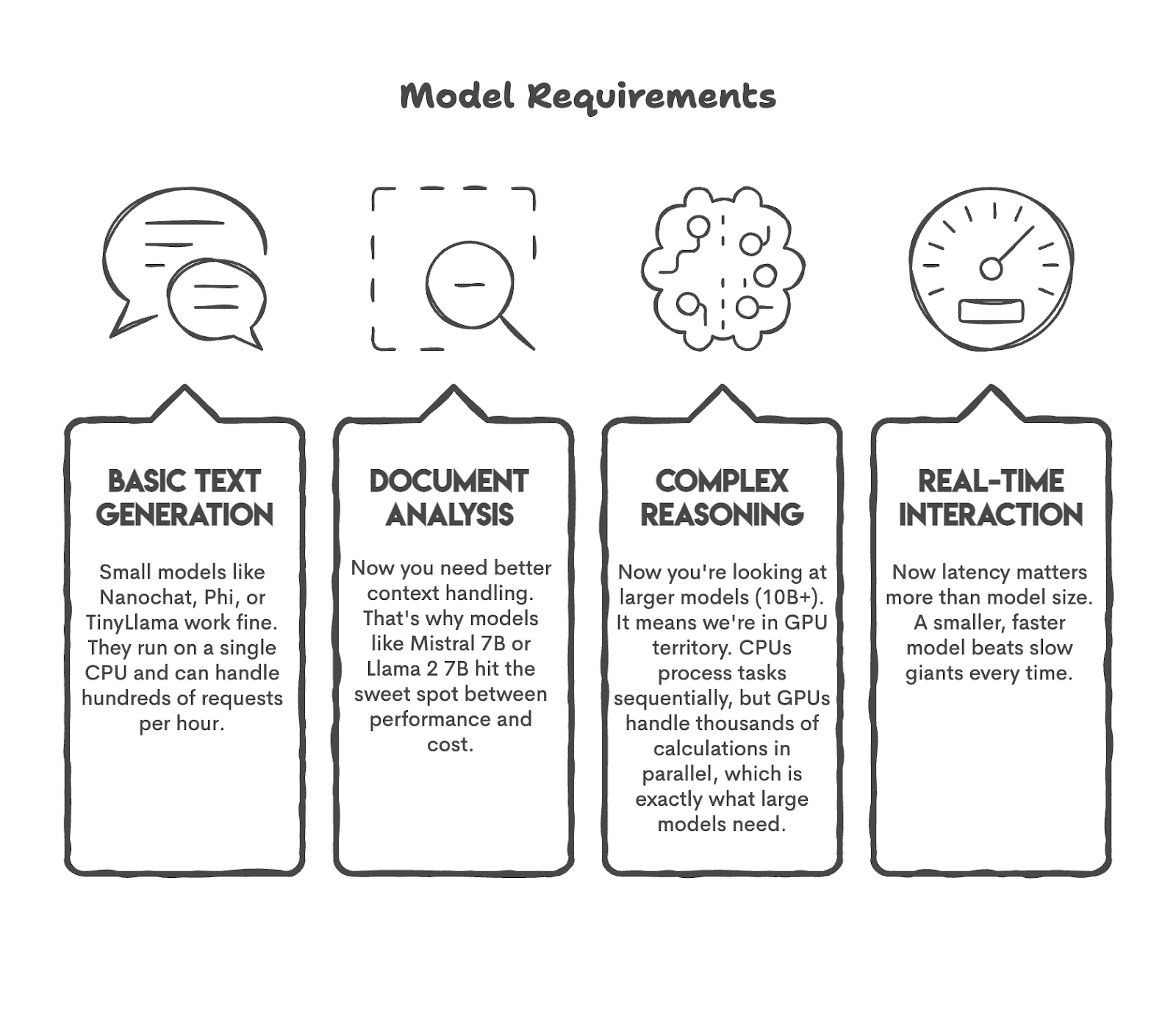

Before you deploy your model, you probably have many questions in mind. For example, what tasks do I expect my model to perform?

Let’s try to answer this question. If you need a bot for 50 users, you don’t need GPT-5. Or if you plan to perform sentiment analysis on over 1,200 tweets per day, you may not need a model with 50 billion parameters.

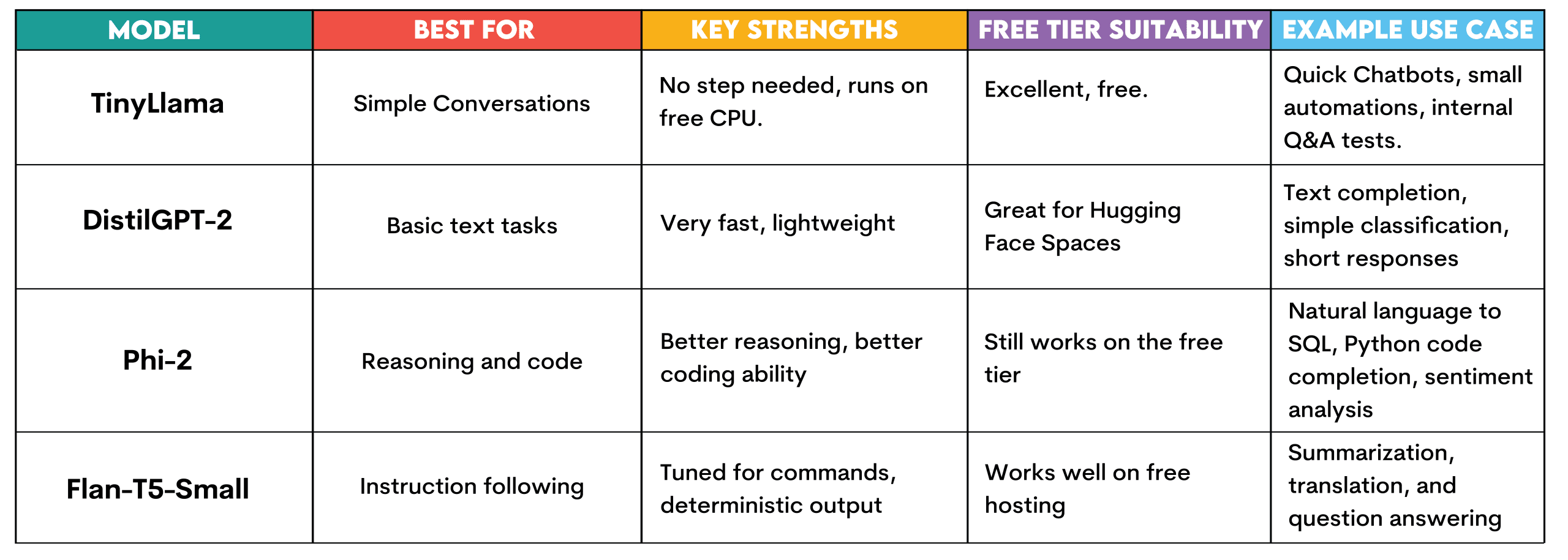

Let’s first look at some common use cases and the models that can perform these tasks.

As you can see, we have matched the model to the task. This is what you should do before you start.

# Breakdown of the actual LLM hosting costs

Now that you know what you need, let me show you how much it costs. Hosting a model is not just about the model; it’s also about where the model runs, how often it runs, and how many people use it. Let’s break down the actual costs.

// Compute: The biggest cost you will incur

If you run a central processing unit (CPU) instance 24 hours a day, 7 days a week on Amazon Web Services (AWS) EC2, it would cost around $36 per month. However, if you run a graphics processing unit (GPU) instance, it will cost about $380 per month, more than 10 times as much. Therefore, be careful when estimating the cost of a large language model, as compute is the major expense.
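The monthly figures above follow from a simple hourly-rate calculation. Here is a minimal sketch, assuming a 30-day month of continuous uptime and two hypothetical hourly rates ($0.05 for a small CPU instance, $0.526 for a single-GPU instance). I chose those rates only because they roughly reproduce the numbers in the text; they are not quoted from AWS, and real rates vary by instance type and region.

```python
HOURS_PER_MONTH = 24 * 30  # assumption: 30-day month, instance never stopped


def monthly_cost(hourly_rate_usd: float, hours: float = HOURS_PER_MONTH) -> float:
    """Cost of keeping one instance running for the given number of hours."""
    return hourly_rate_usd * hours


# Hypothetical hourly rates, picked to match the article's rough figures.
cpu_rate = 0.05
gpu_rate = 0.526

cpu_monthly = monthly_cost(cpu_rate)
gpu_monthly = monthly_cost(gpu_rate)

print(f"CPU: ${cpu_monthly:.2f}/month")
print(f"GPU: ${gpu_monthly:.2f}/month")
print(f"GPU/CPU ratio: {gpu_monthly / cpu_monthly:.1f}x")
```

Swapping in the rate for the instance type you are considering gives a quick first estimate before you open the pricing page.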

(Calculations are approximate; to see the actual price, check here: AWS EC2 pricing).

// Storage: little cost unless your model is huge

Let’s roughly calculate the amount of disk space. A 7B model (7 billion parameters) takes up approximately 14 gigabytes (GB). Cloud storage costs approximately $0.023 per GB per month. So the difference between a 1 GB model and a 14 GB model is about $0.30 per month. Storage costs are negligible unless you plan to host something like a 300B-parameter model.
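The storage arithmetic can be sketched in a few lines. This assumes weights stored at 16-bit precision (2 bytes per parameter), which is what makes a 7B model come out at roughly 14 GB, and uses the $0.023 per GB per month rate from the text. The function names are my own.

```python
def model_size_gb(n_params: float, bytes_per_param: int = 2) -> float:
    """Approximate size of the weights on disk, in decimal gigabytes."""
    return n_params * bytes_per_param / 1e9


def monthly_storage_cost(size_gb: float, usd_per_gb_month: float = 0.023) -> float:
    """Storage bill for one month at the per-GB rate quoted in the text."""
    return size_gb * usd_per_gb_month


size_7b = model_size_gb(7e9)  # 7B parameters at 16-bit precision
extra = monthly_storage_cost(size_7b) - monthly_storage_cost(1.0)

print(f"7B model: about {size_7b:.0f} GB")
print(f"Extra cost vs. a 1 GB model: ${extra:.2f}/month")
```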

// Bandwidth: Economical until you scale up

Bandwidth matters whenever your data moves, and whenever others use your model, your data moves. AWS charges $0.09 per GB after the first GB, so at low traffic you’re looking at pennies. But if you’re scaling to millions of requests, you should calculate this carefully as well.

(Calculations are approximate; to see the actual price, check here: AWS data transfer prices).
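To see where bandwidth stops being pennies, here is a rough sketch that takes the $0.09 per GB rate and the 1 GB free allowance from the text as given. The 2,000-byte average response size is a hypothetical figure; measure your own traffic before relying on the result.

```python
def monthly_transfer_cost(
    requests: int,
    bytes_per_response: int,
    usd_per_gb: float = 0.09,  # rate quoted in the text
    free_gb: float = 1.0,      # free allowance as stated in the text
) -> float:
    """Estimate the outbound data transfer bill for one month."""
    total_gb = requests * bytes_per_response / 1e9
    billable_gb = max(0.0, total_gb - free_gb)
    return billable_gb * usd_per_gb


RESPONSE_BYTES = 2_000  # hypothetical average size of one response

for n_requests in (10_000, 1_000_000, 100_000_000):
    cost = monthly_transfer_cost(n_requests, RESPONSE_BYTES)
    print(f"{n_requests:>11,} requests/month -> ${cost:.2f}")
```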

// Free hosting options you can take advantage of today

Hugging Face Spaces lets you host small CPU models for free. Render and Railway offer free tiers that work for low-traffic demos. If you are experimenting or building a proof of concept, you can go quite far without spending a dime.

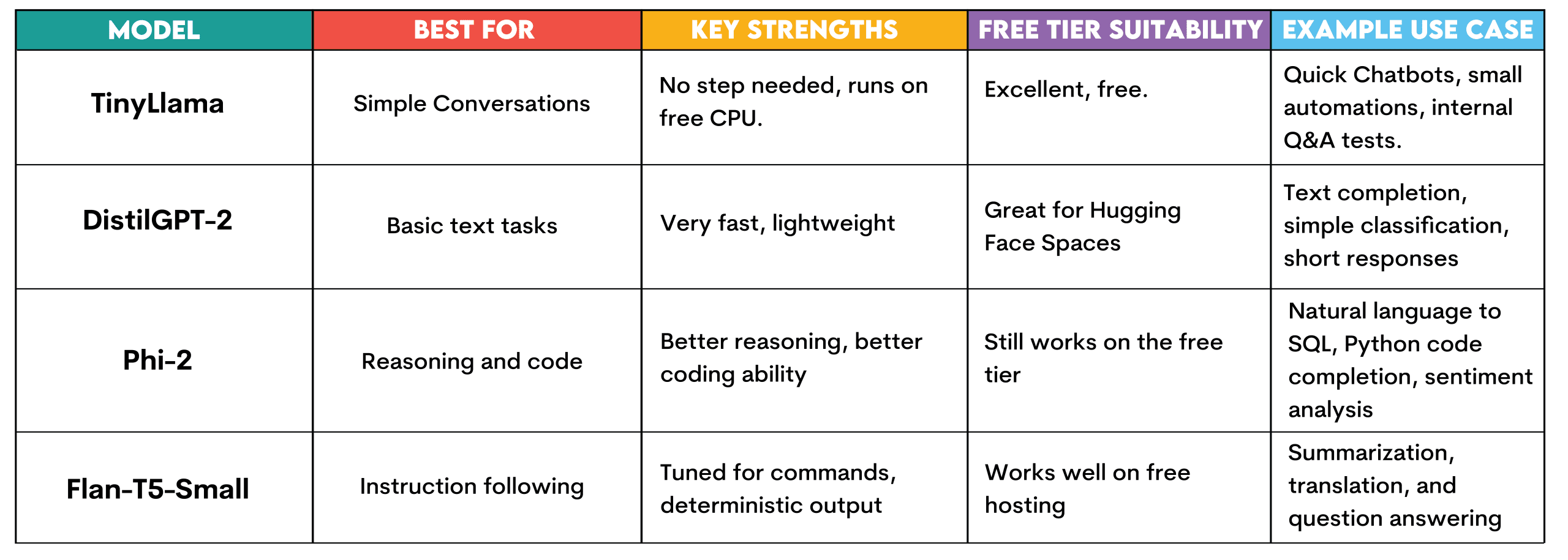

# Choose a model you can actually run

We already know the costs, but which model should we choose? Each model, of course, has its advantages and disadvantages. For example, if you download a 100-billion-parameter model onto your laptop, I guarantee it won’t run unless you have a top-of-the-line, purpose-built workstation.

Let’s look at the different models available on Hugging Face that you can run for free, as we will do in the next section.

TinyLlama: This model requires no setup and runs on Hugging Face’s free CPU tier. It is intended for simple conversational tasks, answering simple questions, and generating text.

You can use it to quickly create and test chatbots, run quick automation experiments, or build internal question-answering systems for testing before committing to an infrastructure investment.

DistilGPT-2: It is also fast and lightweight. This makes it a good fit for Hugging Face Spaces. It is suitable for text completion, very simple classification tasks, or short answers, and for understanding how LLMs work without running into resource constraints.

Phi-2: A small model developed by Microsoft that turns out to be quite effective. It still runs on the free Hugging Face tier, but offers improved reasoning and code generation. Use it to turn natural language into SQL queries, perform simple code completion in Python, or analyze customer feedback.

Flan-T5-Small: This is Google’s instruction-tuned model, created to follow instructions and provide answers. It is useful when you want more deterministic results on free hosting, for tasks such as summarization, translation, or question answering.
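One rough way to sanity-check whether a model will fit on a free CPU tier is to estimate the memory its weights need. The sketch below assumes 32-bit weights (4 bytes per parameter), approximate parameter counts as I recall them from the model cards, and a hypothetical 16 GB RAM limit; check the current Spaces hardware documentation for the real figure. It counts weights only, so actual memory use will be higher.

```python
# Approximate parameter counts (assumptions, rounded).
APPROX_PARAMS = {
    "TinyLlama": 1.1e9,
    "DistilGPT-2": 82e6,
    "Phi-2": 2.7e9,
    "Flan-T5-Small": 80e6,
}

RAM_LIMIT_GB = 16.0  # hypothetical free-tier RAM limit


def weights_ram_gb(n_params: float, bytes_per_param: int = 4) -> float:
    """Memory needed just to hold the weights, in decimal gigabytes."""
    return n_params * bytes_per_param / 1e9


for name, n_params in APPROX_PARAMS.items():
    need = weights_ram_gb(n_params)
    verdict = "fits" if need < RAM_LIMIT_GB else "too large"
    print(f"{name:<14} ~{need:5.2f} GB of weights -> {verdict}")
```

Passing `bytes_per_param=2` or `1` gives a feel for how much headroom lower-precision weights would buy.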

# Set up TinyLlama in 5 minutes

Let’s build and deploy TinyLlama using Hugging Face Spaces for free. No credit card, no AWS account, no Docker problems. Just a working chatbot that you can share via link.

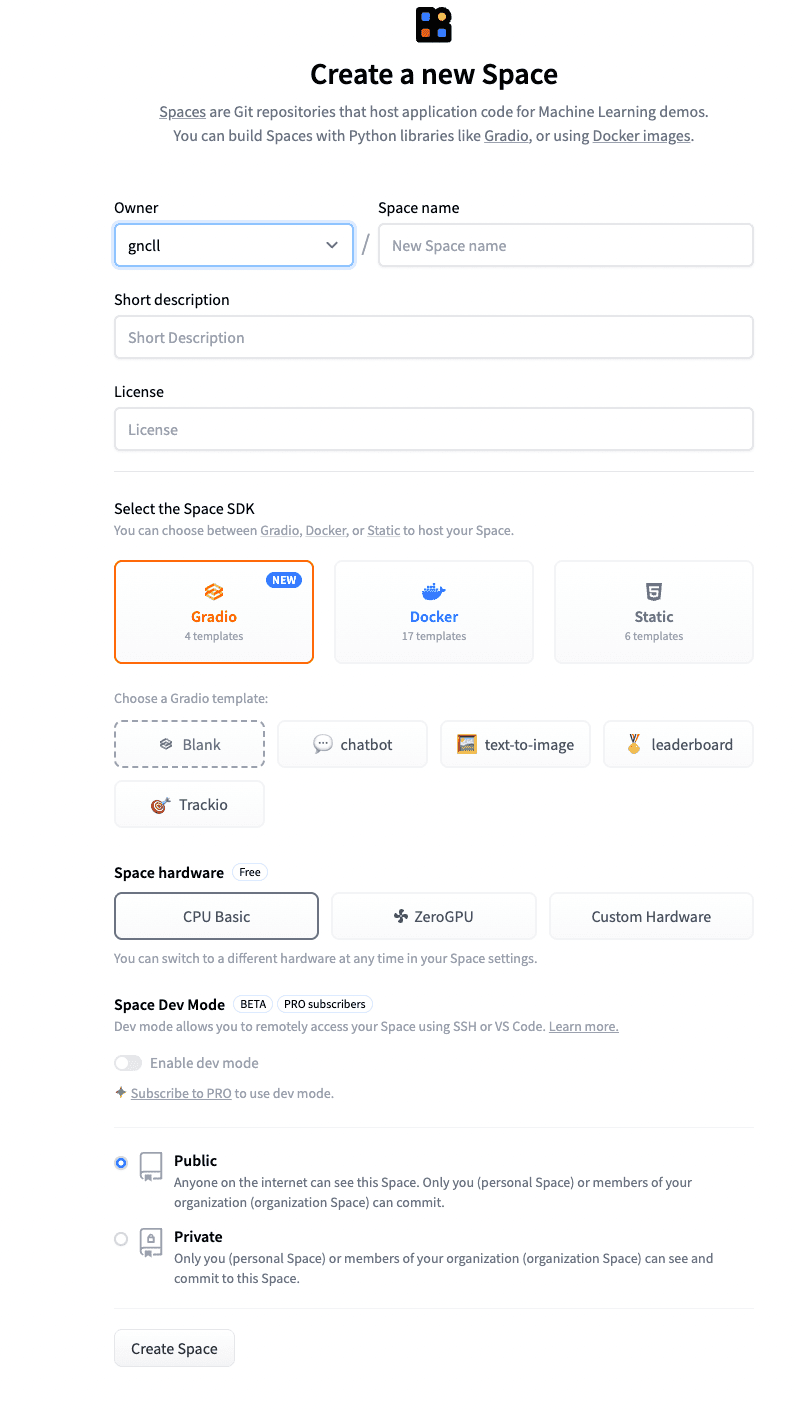

// Step 1: Go to Hugging Face Spaces

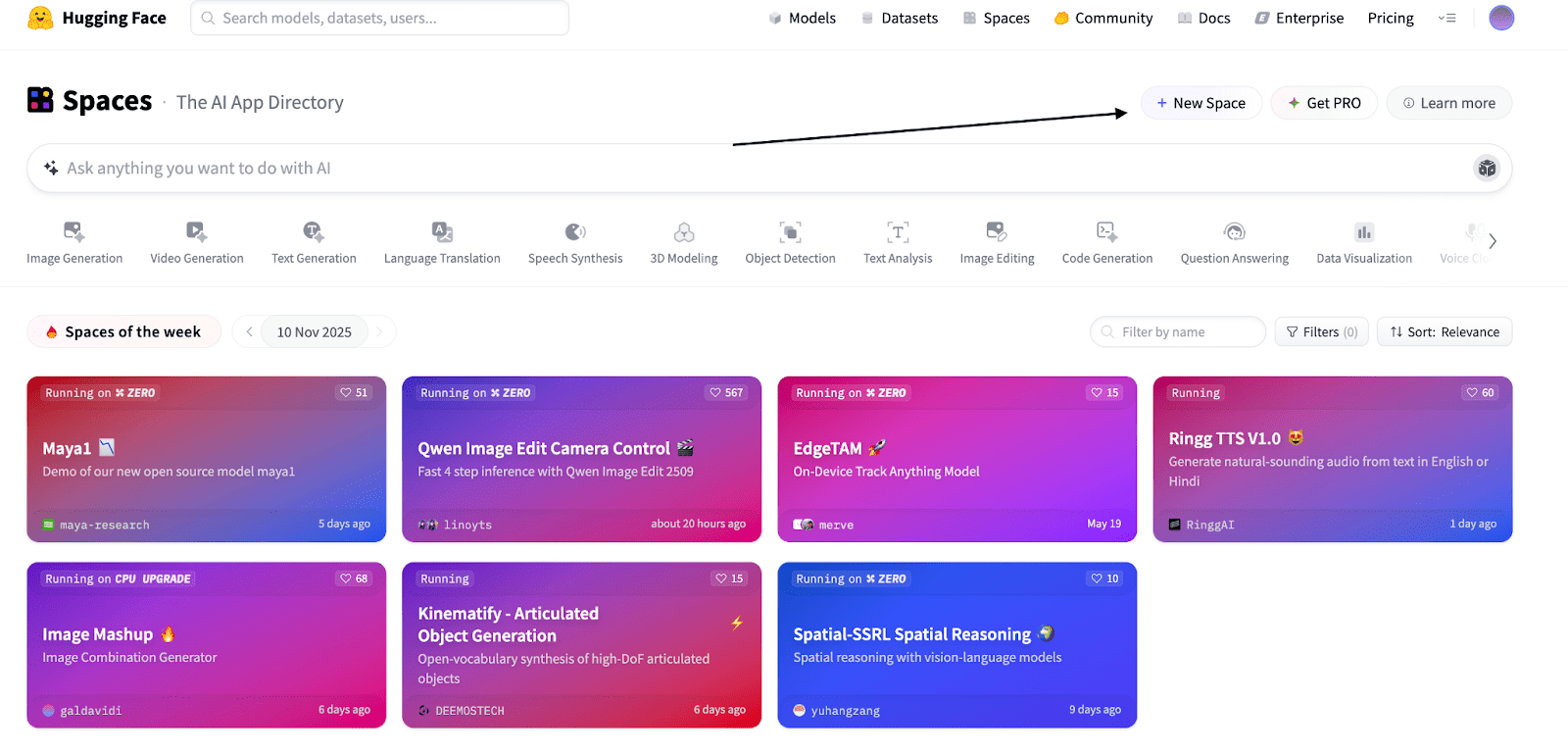

Go to huggingface.co/spaces and click “New Space” as in the screenshot below.

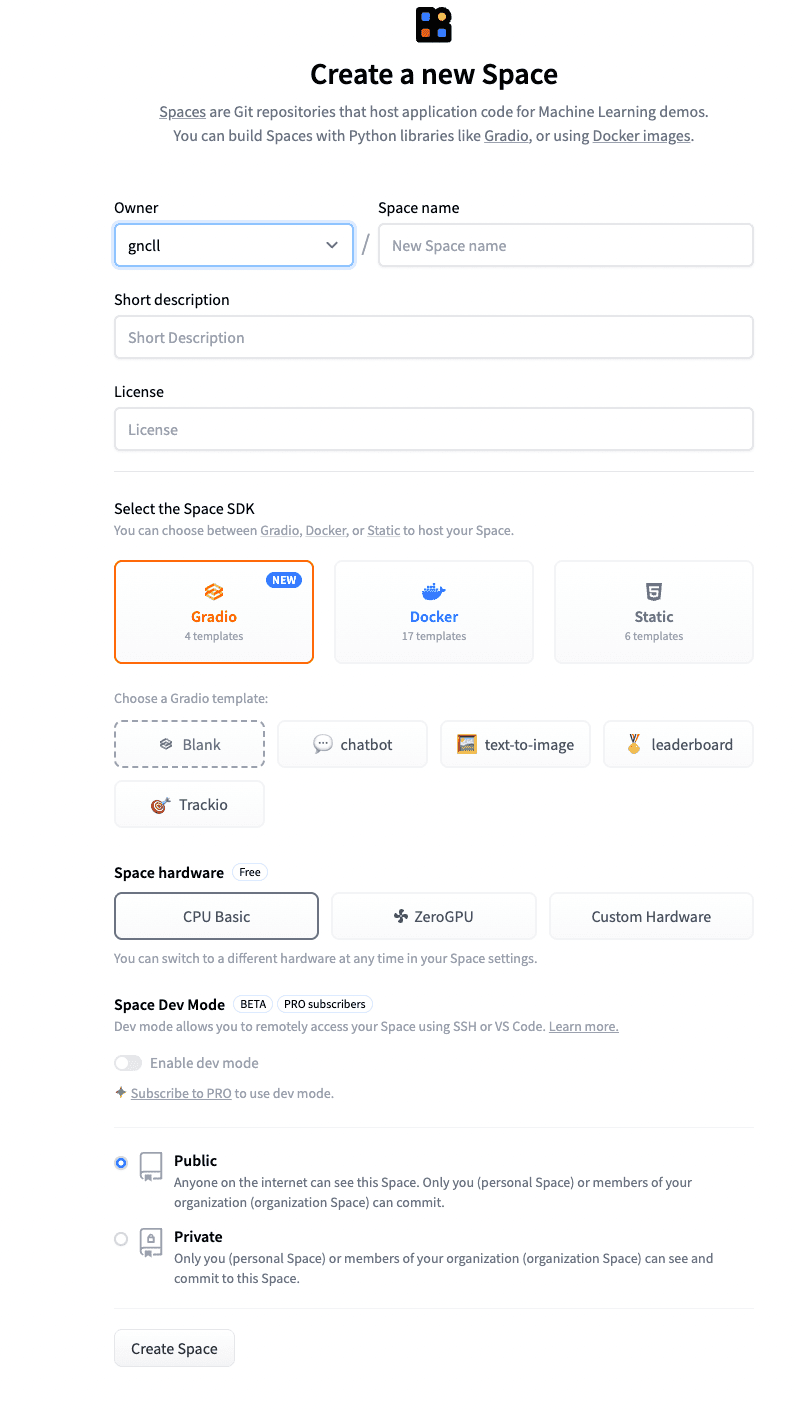

Name the Space whatever you want and add a short description.

You can leave the remaining settings unchanged.

Click “Create Space.”

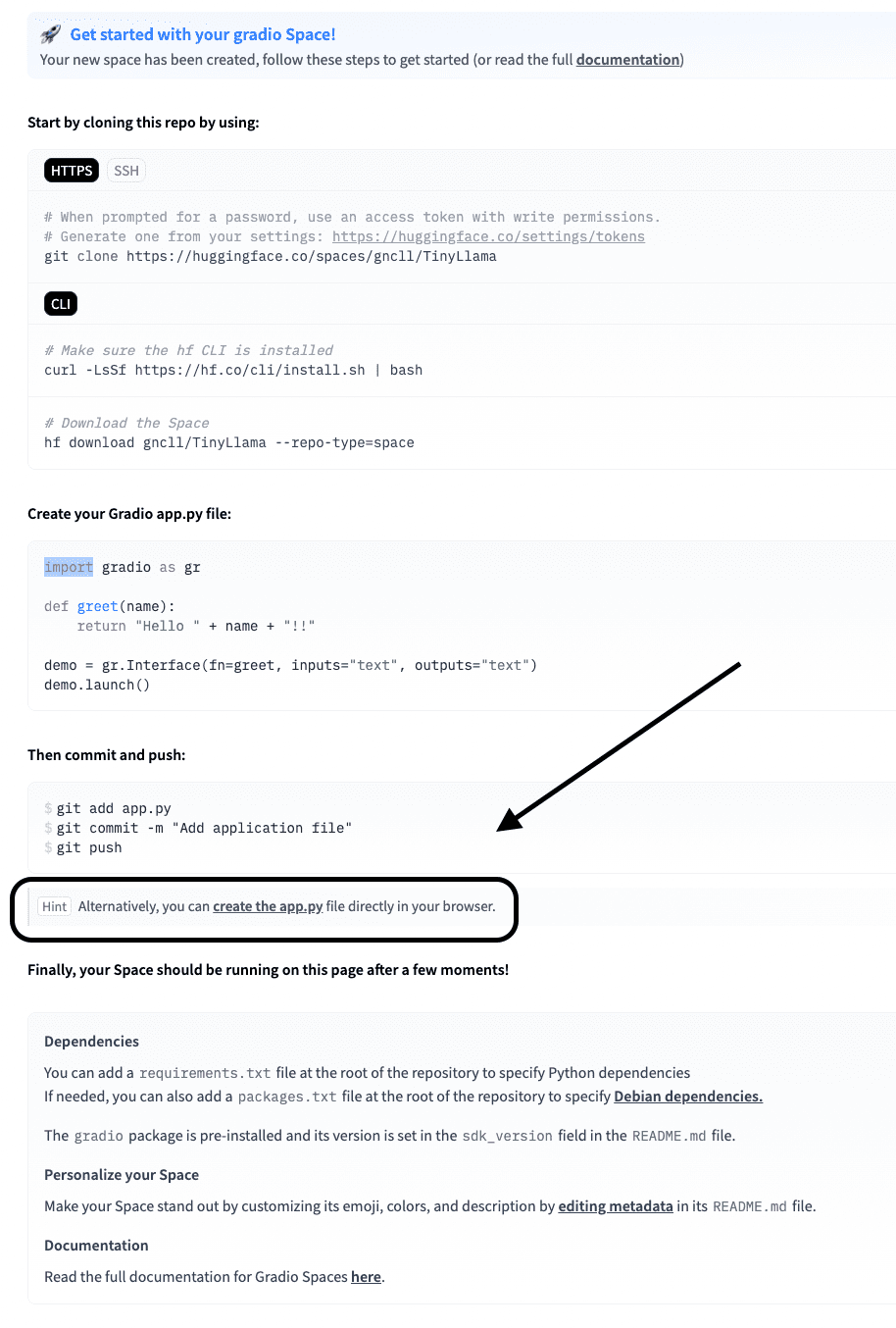

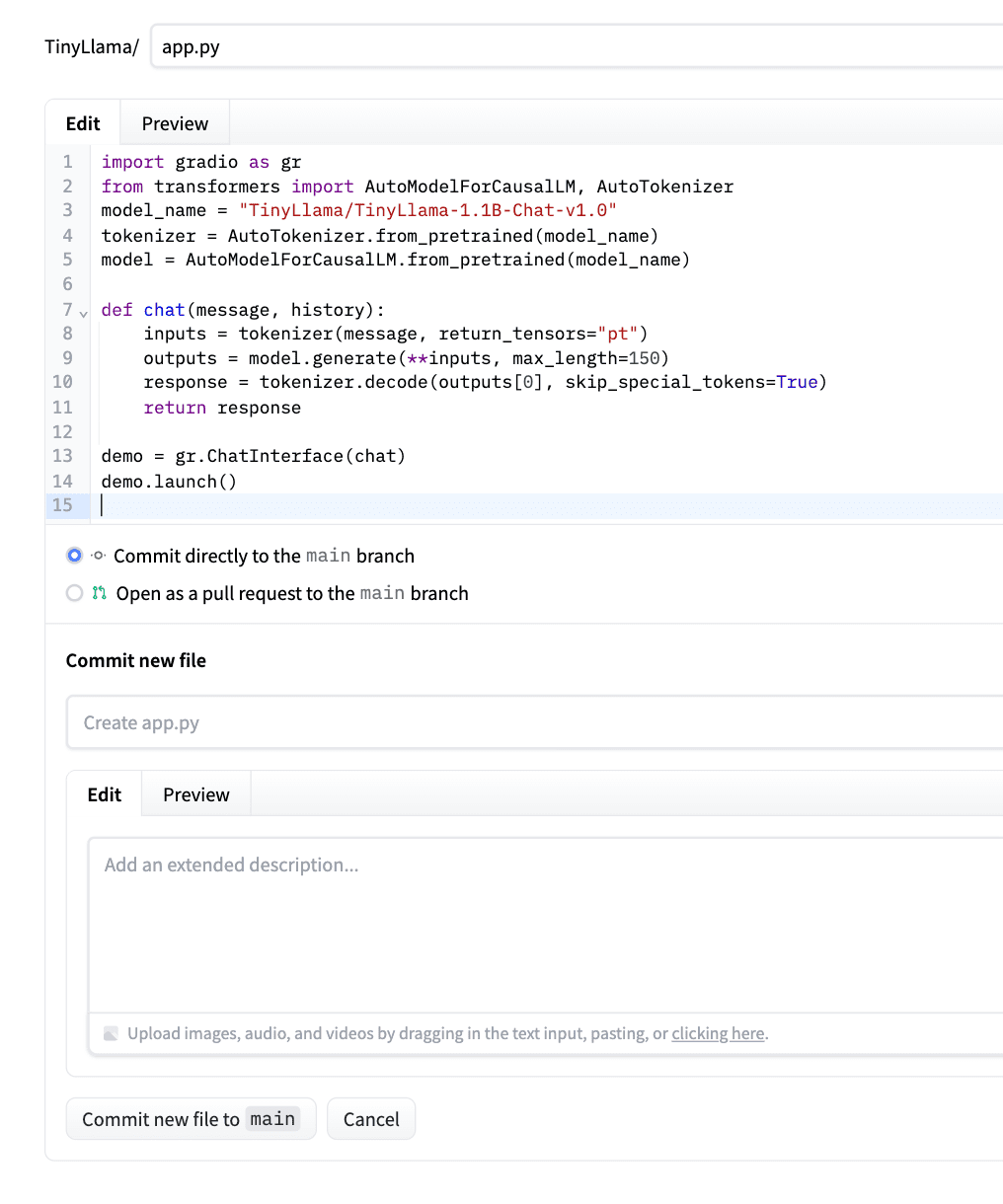

// Step 2: Write the app.py file

Now click “create app.py file” on the screen below.

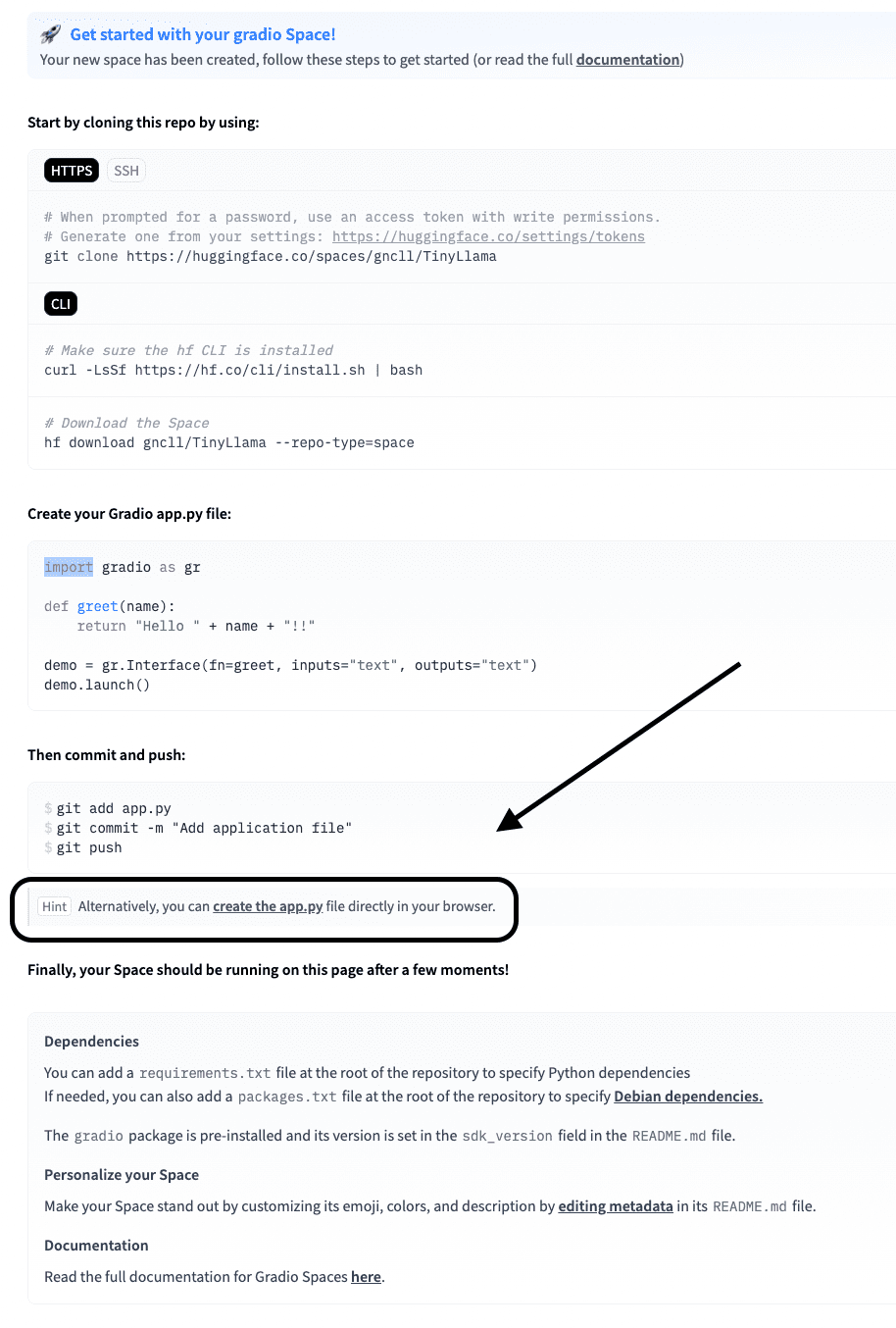

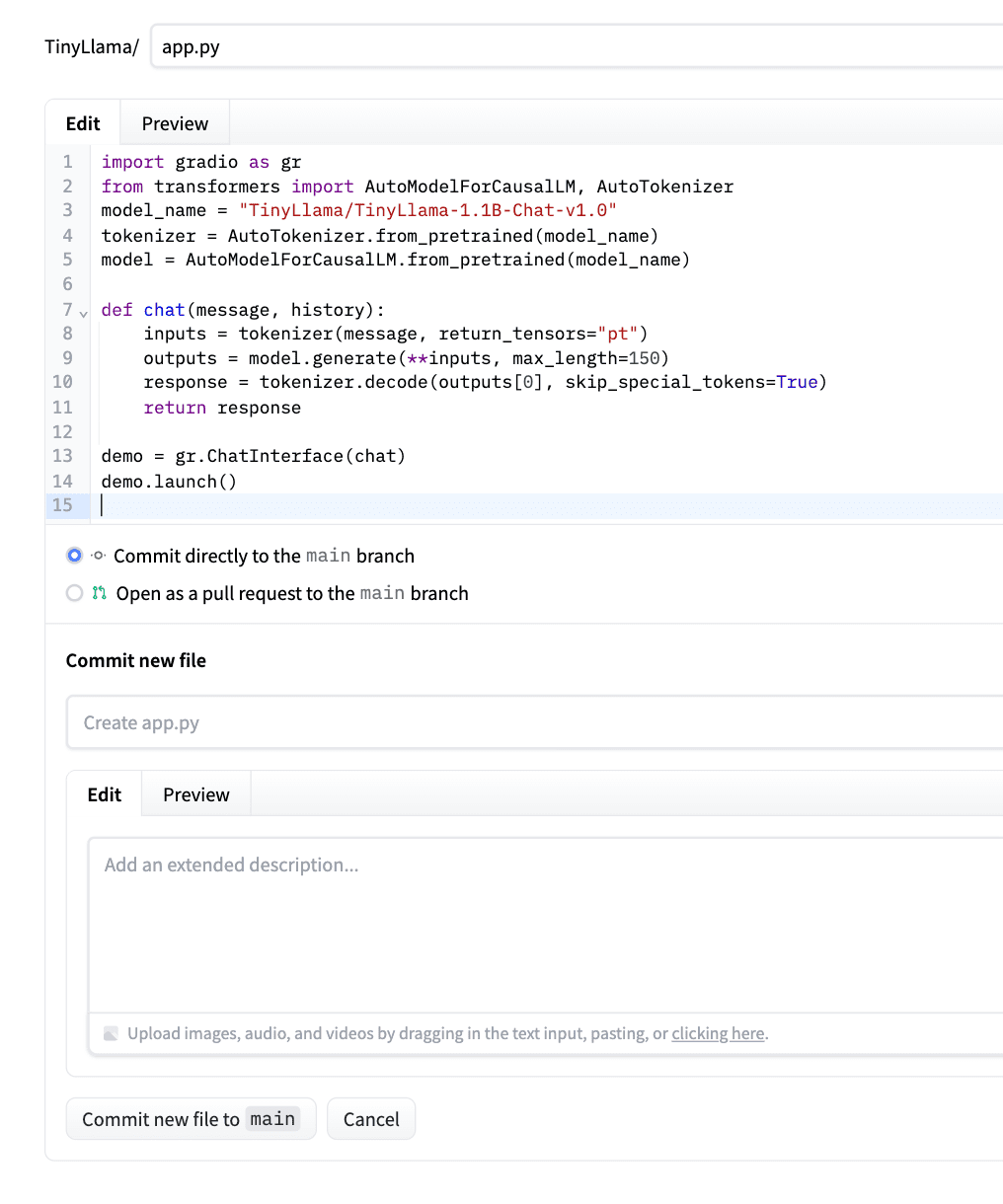

Paste the code below in the app.py file.

This code loads TinyLlama (with the model files available on Hugging Face), wraps it in a chat function, and uses Gradio to create a web interface. The chat() function formats your message as a prompt, generates a response (maximum 100 new tokens), and returns only the model’s answer, without repeating the question asked.

Here is a site where you can learn how to write code for any Hugging Face model.

Let’s see the code.

    import gradio as gr
    from transformers import AutoModelForCausalLM, AutoTokenizer

    # Load the tokenizer and model weights from the Hugging Face Hub
    model_name = "TinyLlama/TinyLlama-1.1B-Chat-v1.0"
    tokenizer = AutoTokenizer.from_pretrained(model_name)
    model = AutoModelForCausalLM.from_pretrained(model_name)

    def chat(message, history):
        # Prepare the prompt in chat format
        prompt = f"<|user|>\n{message}\n<|assistant|>\n"
        inputs = tokenizer(prompt, return_tensors="pt")

        # Generate up to 100 new tokens with sampling
        outputs = model.generate(
            **inputs,
            max_new_tokens=100,
            temperature=0.7,
            do_sample=True,
            pad_token_id=tokenizer.eos_token_id,
        )

        # Decode only the newly generated tokens, skipping the prompt
        response = tokenizer.decode(outputs[0][inputs['input_ids'].shape[1]:], skip_special_tokens=True)
        return response

    # Wrap the chat function in a Gradio chat UI and start the app
    demo = gr.ChatInterface(chat)
    demo.launch()

After pasting the code, click “Commit new file to main.” As an example, check the screenshot below.

Hugging Face will automatically detect this, install the dependencies, and deploy the application.
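The slice `outputs[0][inputs['input_ids'].shape[1]:]` in chat() is what keeps the prompt out of the reply: for a decoder-only model, generate() returns the prompt tokens followed by the newly generated ones. Here is a toy illustration with plain Python lists and made-up token IDs (no model involved):

```python
# Made-up IDs standing in for the tokenized prompt.
prompt_ids = [101, 7, 42, 9]

# A decoder-only generate() call returns the prompt plus the continuation.
generated_ids = prompt_ids + [55, 23, 8]

# This plays the role of inputs['input_ids'].shape[1] in app.py.
prompt_length = len(prompt_ids)

# Dropping the first prompt_length tokens leaves only the model's reply.
reply_ids = generated_ids[prompt_length:]

print(reply_ids)  # [55, 23, 8]
```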

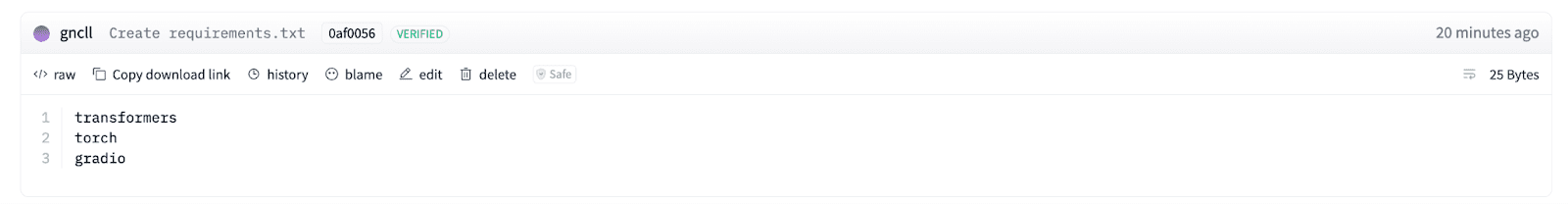

Meanwhile, create a requirements.txt file; otherwise you will get an error like this.

// Step 3: Create a requirements.txt file

Click “Files” in the upper right corner of the screen.

Here, click “Create New File” as in the screenshot below.

Name the file “requirements.txt” and add 3 Python libraries as shown in the screenshot below (transformers, torch, gradio).

Transformers loads the model and handles tokenization. Torch runs the model, since it provides the neural network engine. Gradio creates a simple web interface through which users can talk to the model.
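If you also want to try app.py on your own machine, a quick check that the three libraries are importable can save a failed start. This is a minimal sketch using only the standard library; the helper name `missing_packages` is my own and not part of any of these libraries.

```python
import importlib.util

# The three libraries listed in requirements.txt.
REQUIRED = ["transformers", "torch", "gradio"]


def missing_packages(names):
    """Return the names that cannot be found as importable top-level modules."""
    return [name for name in names if importlib.util.find_spec(name) is None]


missing = missing_packages(REQUIRED)
if missing:
    print("Not installed:", ", ".join(missing))
else:
    print("All required packages are importable.")
```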

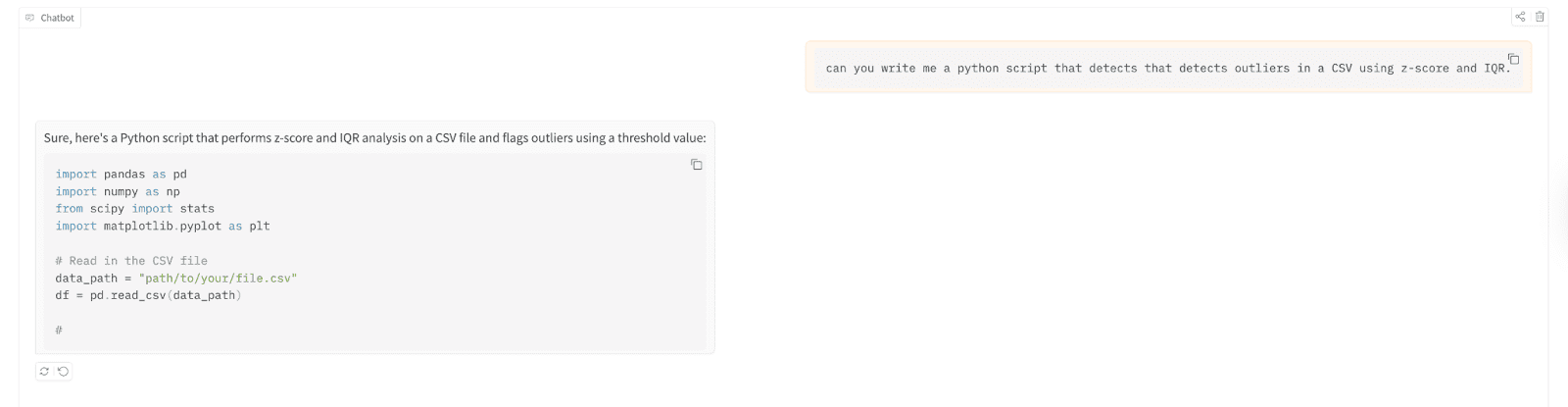

// Step 4: Run and test the deployed model

When you see the green “Running” light, you’re done.

Now let’s test it.

You can test this by clicking on the app first here.

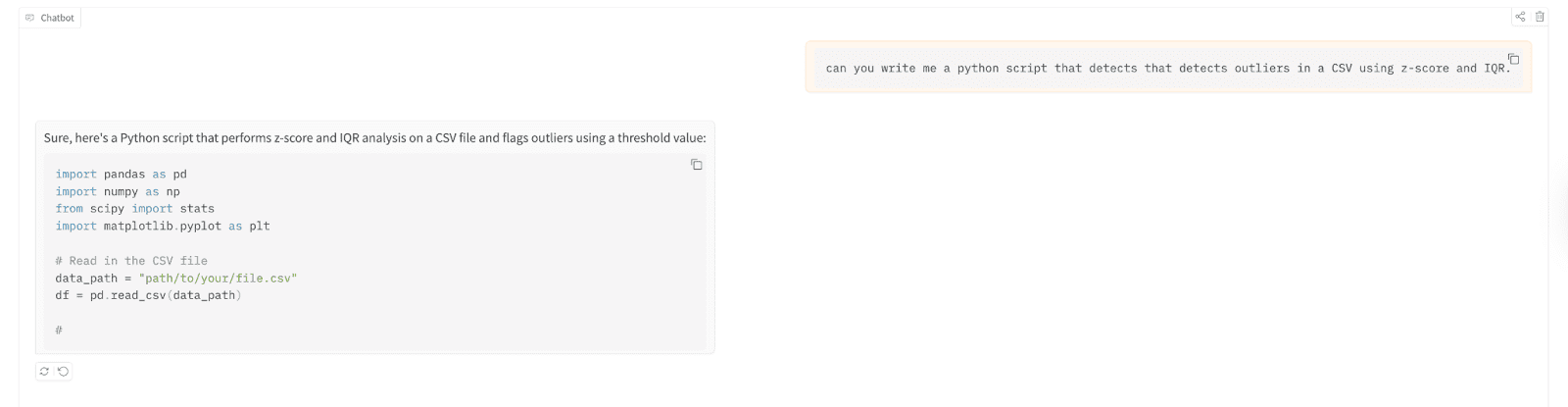

Let’s apply it to write a Python script that detects outliers in a file values separated by commas (CSV) using z-score and Interquartile range (IQR).

Here are the test results;

// Understanding the deployment you just created

As a result, you can now run a language model with 1B+ parameters without ever touching a terminal, configuring a server, or spending a dollar. Hugging Face takes care of the hosting, compute, and scaling (to a point). A paid tier is available for higher traffic. But for experimental purposes, it is perfect.

The best way to learn? Deploy first, optimize later.

# Where to go next: improving and extending the model

Now you have a working chatbot. But TinyLlama is just the beginning. If you need better answers, try upgrading to Phi-2 or Mistral 7B using the same process. Just change the model name in app.py and add a little more compute.

For faster answers, look at quantization. You can also connect your model to a database, add memory for conversations, or fine-tune it with your own data, so the only limit is your imagination.
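For conversation memory, one simple approach is to fold earlier turns into the prompt. The sketch below assumes `history` arrives as a list of (user, assistant) string pairs and that each turn ends with a `</s>` token, which is my understanding of TinyLlama’s chat template rather than something the code in this article uses. Gradio’s actual history format depends on its version and settings, and `build_prompt` and `max_turns` are hypothetical names. In practice, the tokenizer’s own `apply_chat_template` method is likely the safer route.

```python
from typing import List, Tuple


def build_prompt(message: str, history: List[Tuple[str, str]], max_turns: int = 3) -> str:
    """Build a multi-turn prompt from the most recent turns plus the new message."""
    parts = []
    # Keep only the last few turns so the prompt stays short on a small CPU model.
    recent = history[-max_turns:] if max_turns > 0 else []
    for user_msg, assistant_msg in recent:
        parts.append(f"<|user|>\n{user_msg}</s>\n")
        parts.append(f"<|assistant|>\n{assistant_msg}</s>\n")
    # End with an open assistant turn for the model to complete.
    parts.append(f"<|user|>\n{message}</s>\n<|assistant|>\n")
    return "".join(parts)


history = [("Hi", "Hello! How can I help?")]
print(build_prompt("What is a z-score?", history))
```

Capping the number of turns is a deliberate trade-off: longer prompts give the model more context but slow down generation on CPU.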

Nate Rosidi is a data scientist and product strategist. He is also an adjunct professor of analytics and the founder of StrataScratch, a platform that helps data scientists prepare for job interviews using real interview questions from top companies. Nate writes about the latest career trends, gives interview advice, shares data science projects, and discusses all things SQL.