Photo by the author

As you dive into the world of data science and machine learning, one of the fundamental skills you’ll encounter is the art of reading data. If you’re already experienced in this, you’re probably familiar with JSON (JavaScript Object Notation), a popular format for both storing and exchanging data.

Think about how NoSQL databases like MongoDB love to store data in JSON format, or how REST APIs often respond in the same format.

However, the JSON format, while ideal for storage and exchange, is not fully ready for in-depth analysis in its raw form. This is where we transform it into something more analytically warm – a tabular format.

So whether you’re dealing with a single JSON object or a gorgeous array of them, in Python you’re essentially dealing with a dictionary or a list of dictionaries.

Let’s explore together how this transformation takes place so that our data is ready for analysis ????

Today I will explain a magic command that allows us to easily parse any JSON file into a tabular format in seconds.

And this is… p.s.json_normalize()

So let’s see how this works with different types of JSON.

The first type of JSON we can work with is single-level JSON with several keys and values. We define our first plain JSON files as follows:

Code by author

So let’s simulate the need to work with these JSON. We all know there isn’t much to do with their JSON format. We need to transform these JSON files into a readable and modifiable format… which means Pandas Dataframes!

1.1 Dealing with plain JSON structures

First we need to import the pandas library and then we can exploit the pd.json_normalize() command as follows:

import pandas as pd

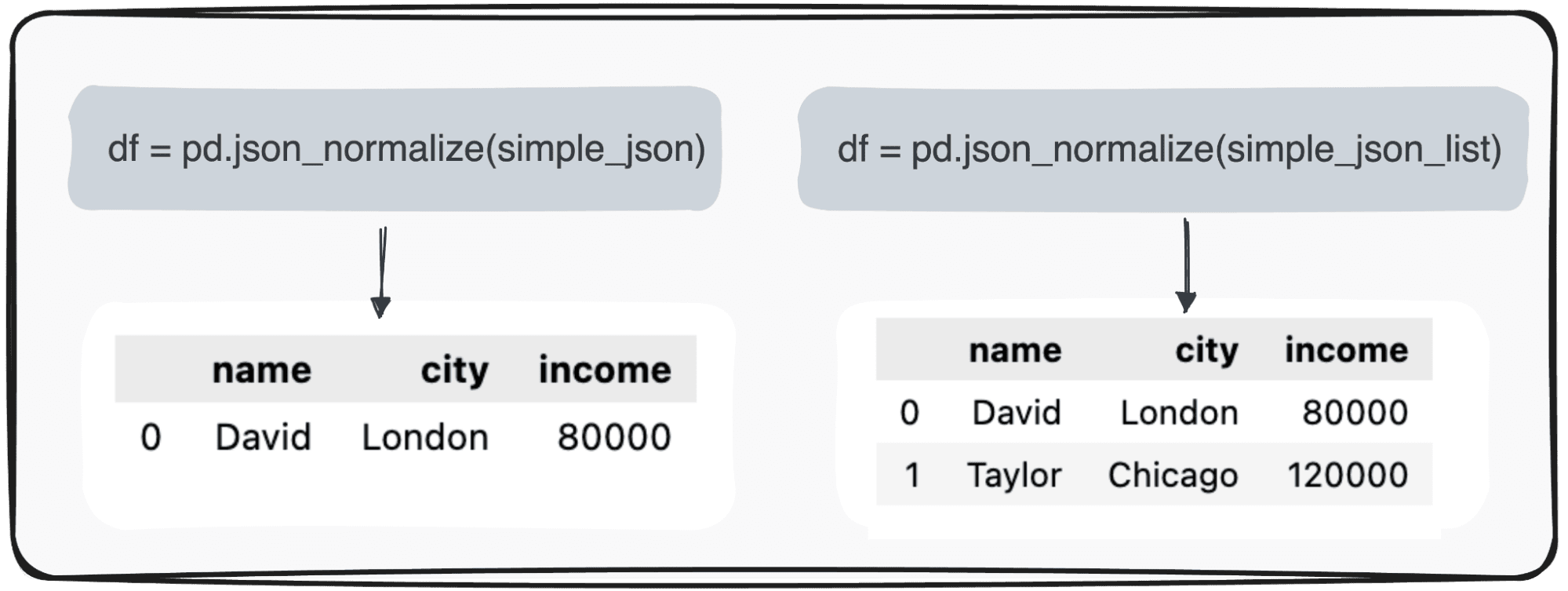

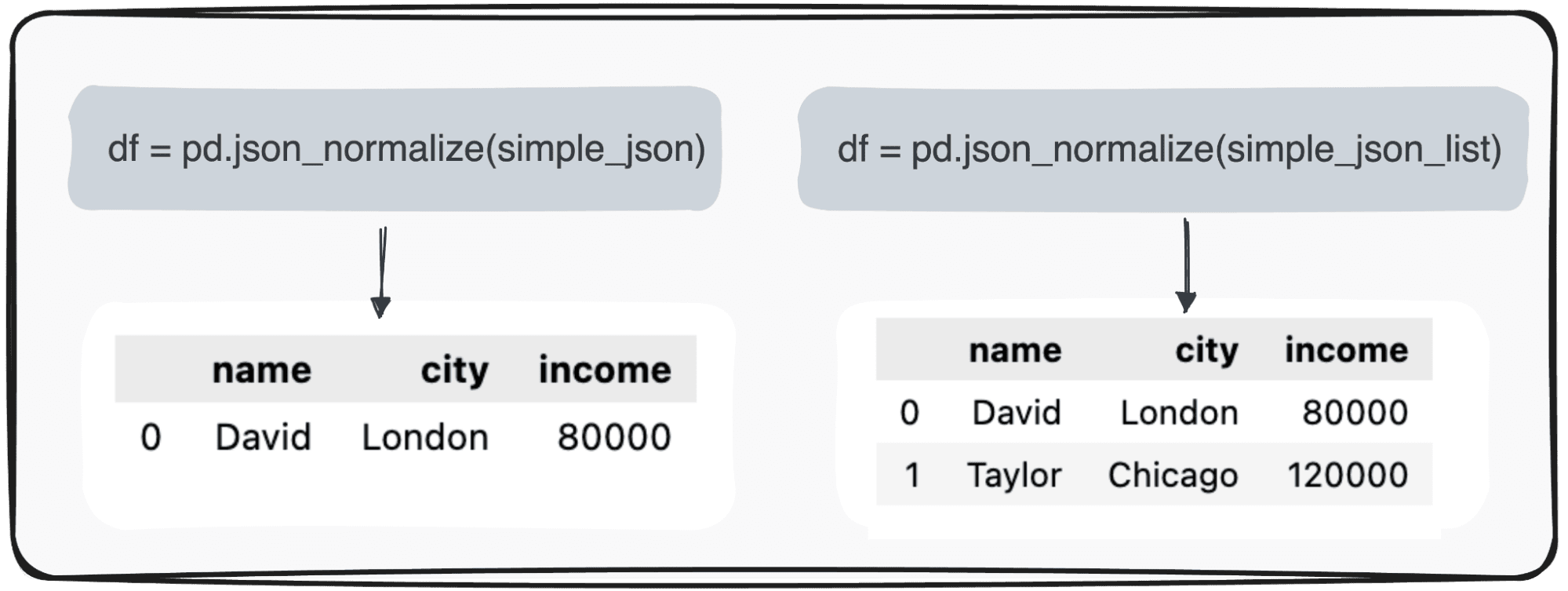

pd.json_normalize(json_string)Applying this command to single-record JSON produces the simplest table possible. However, when our data is a bit more convoluted and represents a JSON list, we can still exploit the same command without further complications and the output will correspond to a table with multiple records.

Photo by the author

Straightforward…right?

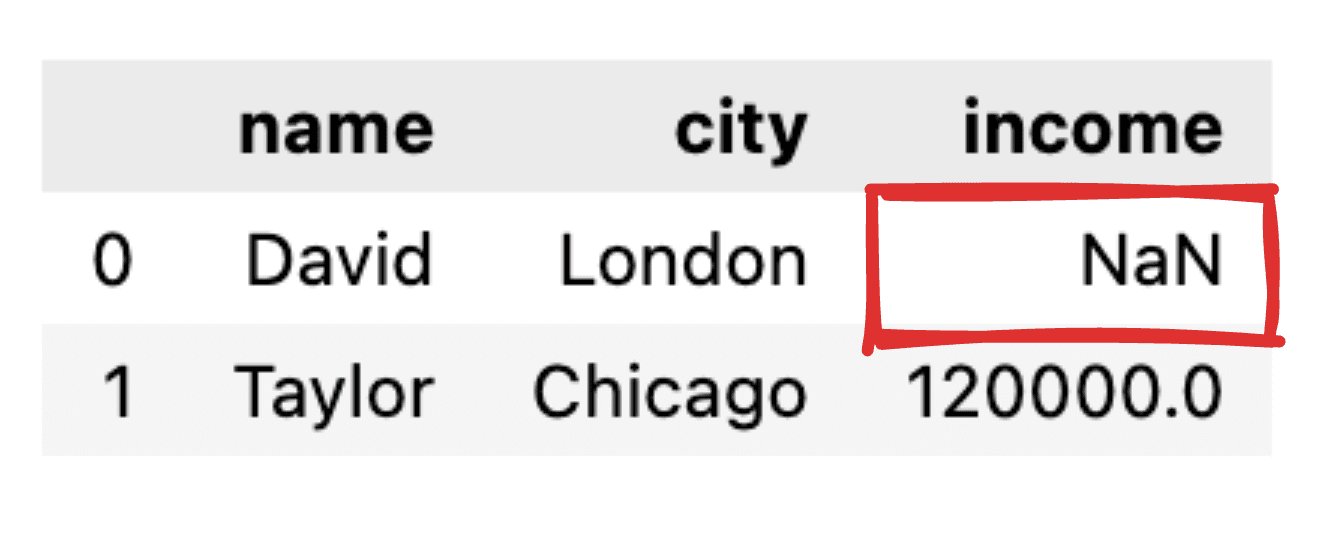

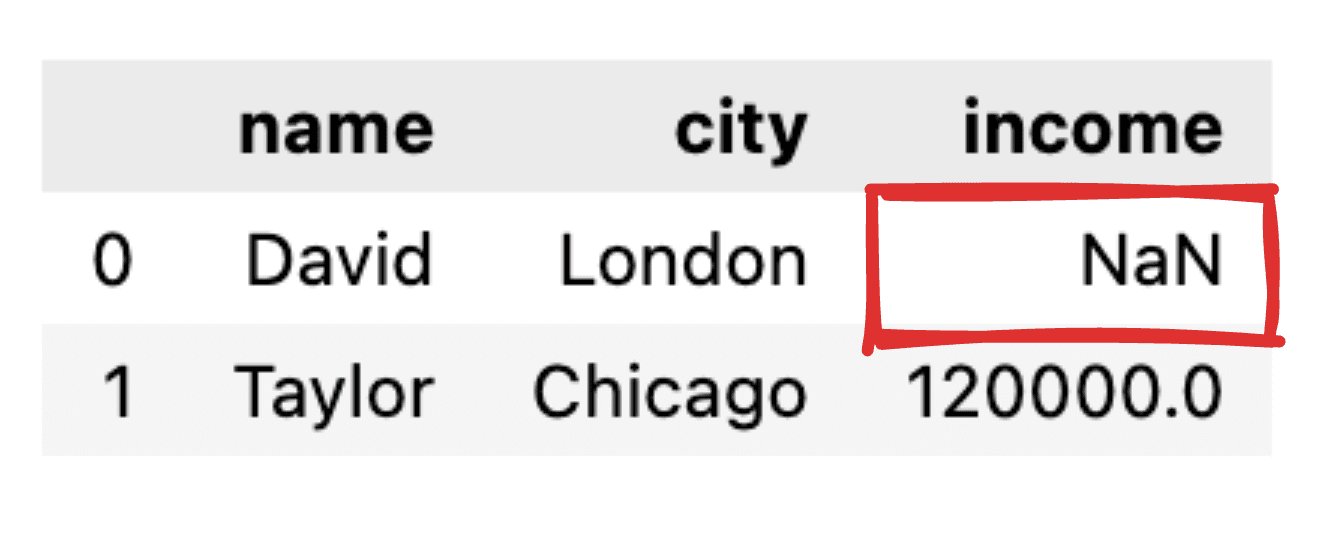

The next natural question is what happens when some values are missing.

1.2 Dealing with null values

Imagine that some values are not informed, for example the Income record for David is missing. When transforming our JSON into a plain pandas dataframe, the corresponding value will appear as NaN.

Image by author

What if I only want to get some of the fields?

1.3 Selecting only compelling columns

In case we just want to transform some specific fields into a pandas tabular dataframe, json_normalize() command does not allow us to select which fields to transform.

Therefore, you need to perform some minor pre-processing of the JSON, which will filter only the columns you are interested in.

# Fields to include

fields = ['name', 'city']

# Filter the JSON data

filtered_json_list = [{key: value for key, value in item.items() if key in fields} for item in simple_json_list]

pd.json_normalize(filtered_json_list)

So let’s move on to a more advanced JSON structure.

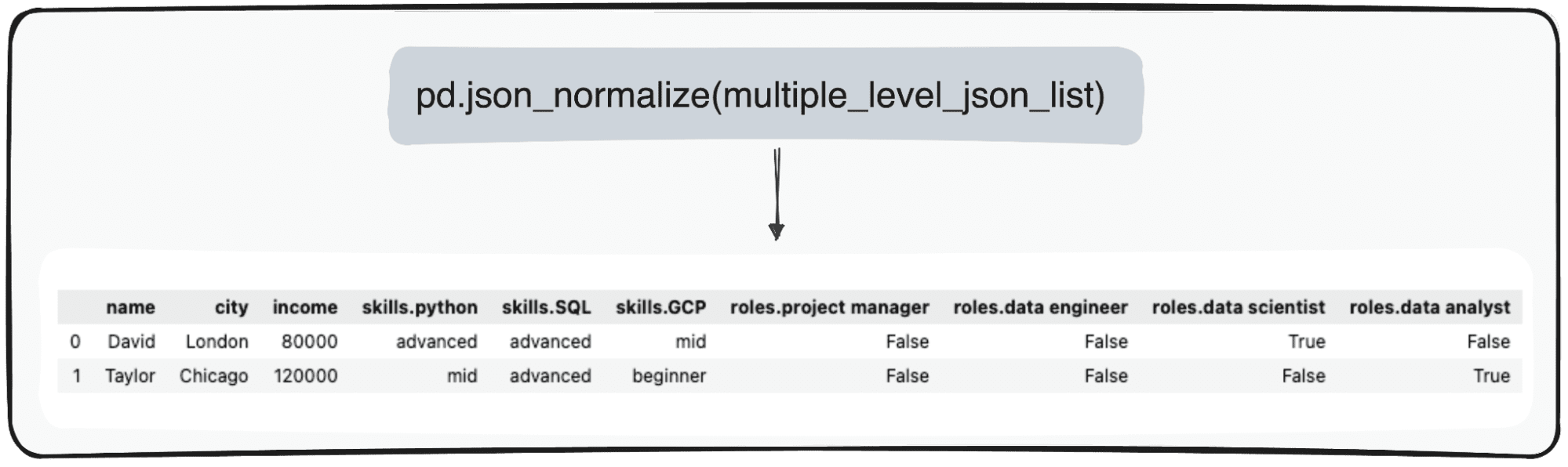

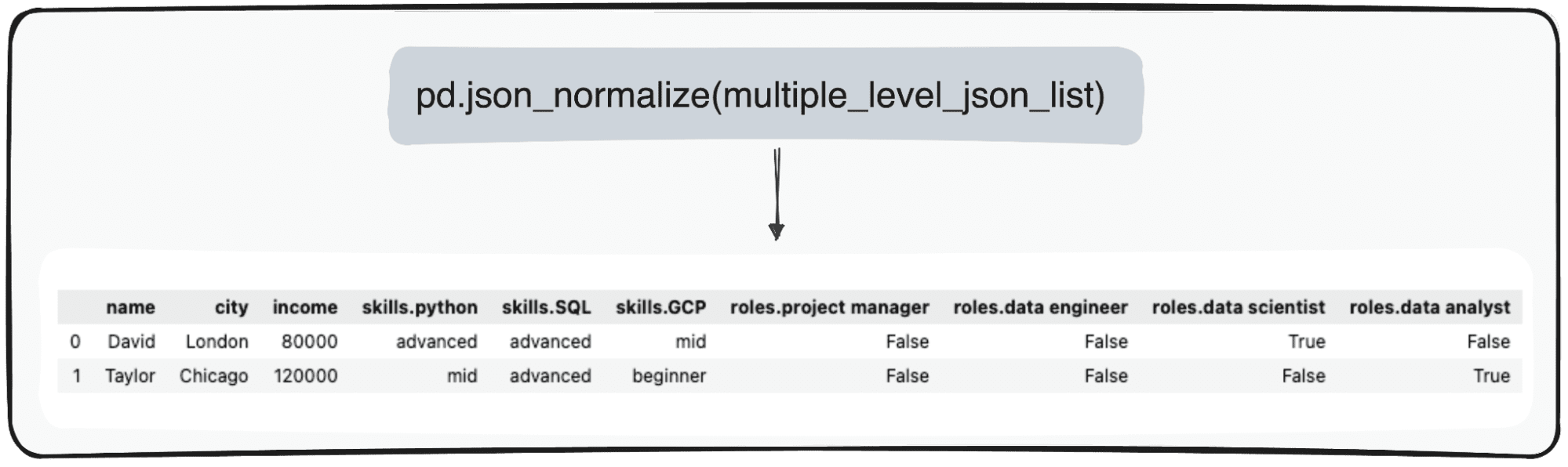

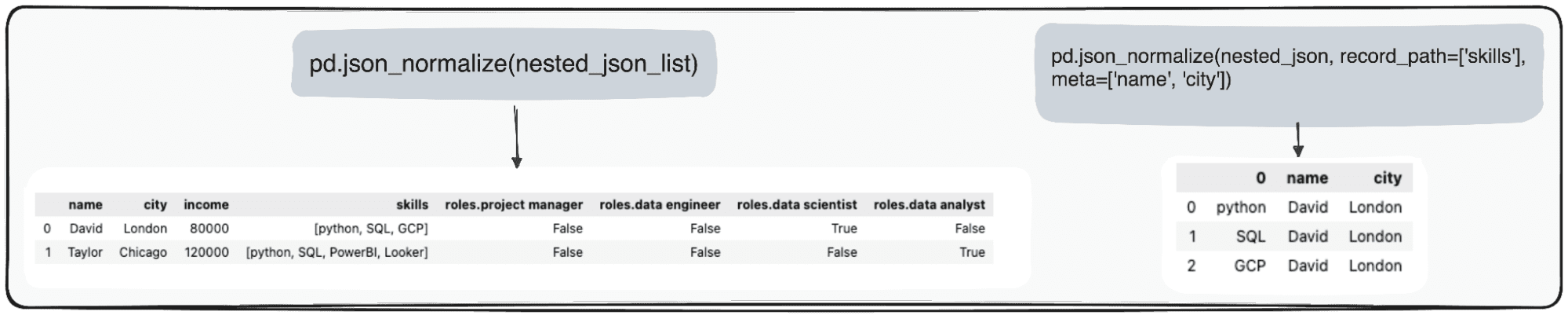

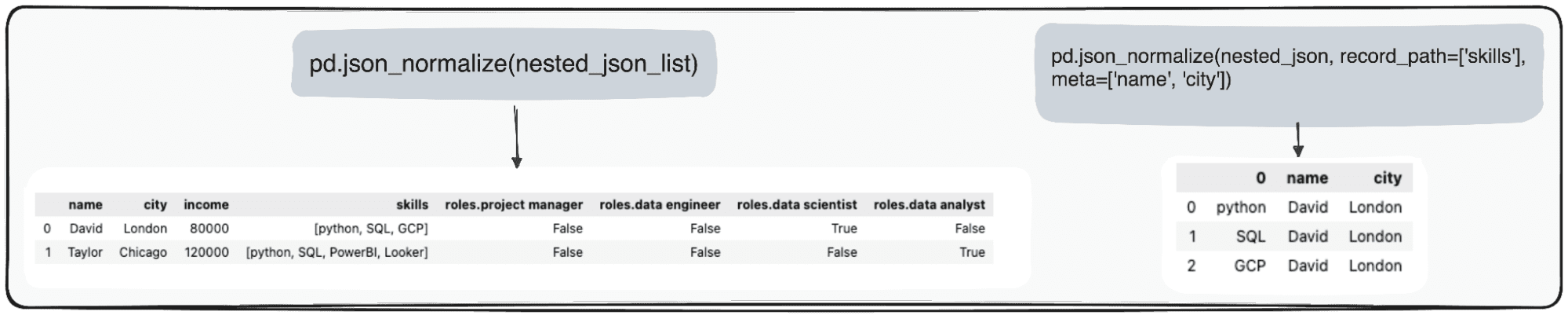

In the case of multi-level JSONs, we are dealing with nested JSONs at different levels. The procedure is the same as before, but in this case we can choose how many levels we want to transform. By default, the command always expands all levels and generates novel columns containing the concatenated names of all nested levels.

So if we normalize the following JSON files.

Code by author

We would get the following table with 3 columns under the field skills:

- skills.python

- skills.SQL

- skills.GCP

and 4 columns under field roles

- role.project manager

- role.data engineer

- role.data scientist

- role.data analyst

Image by author

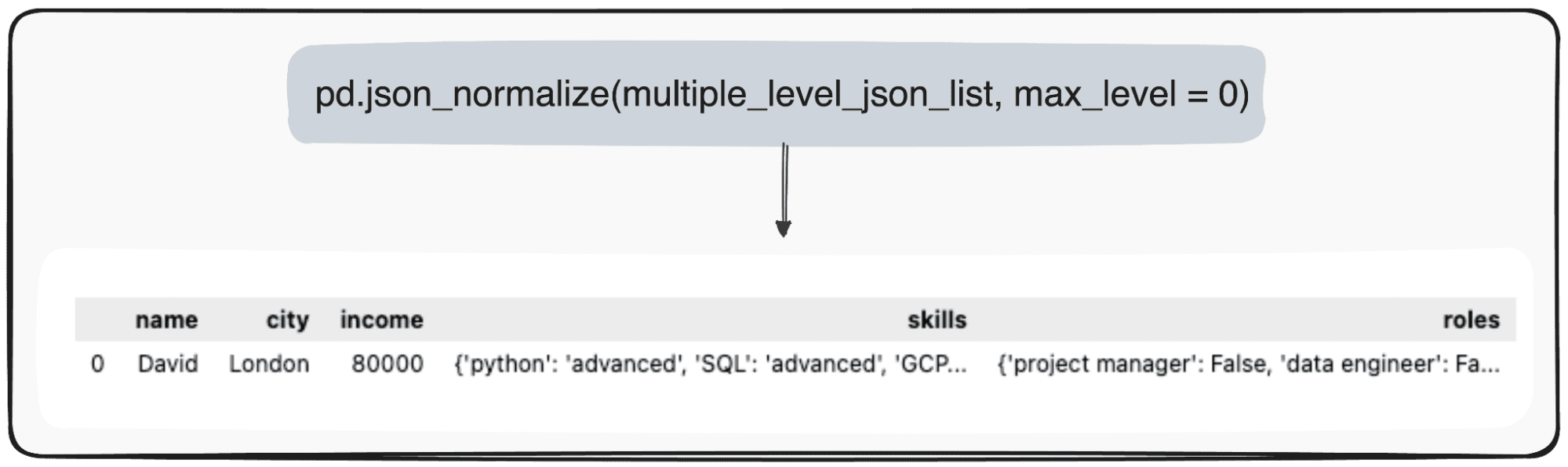

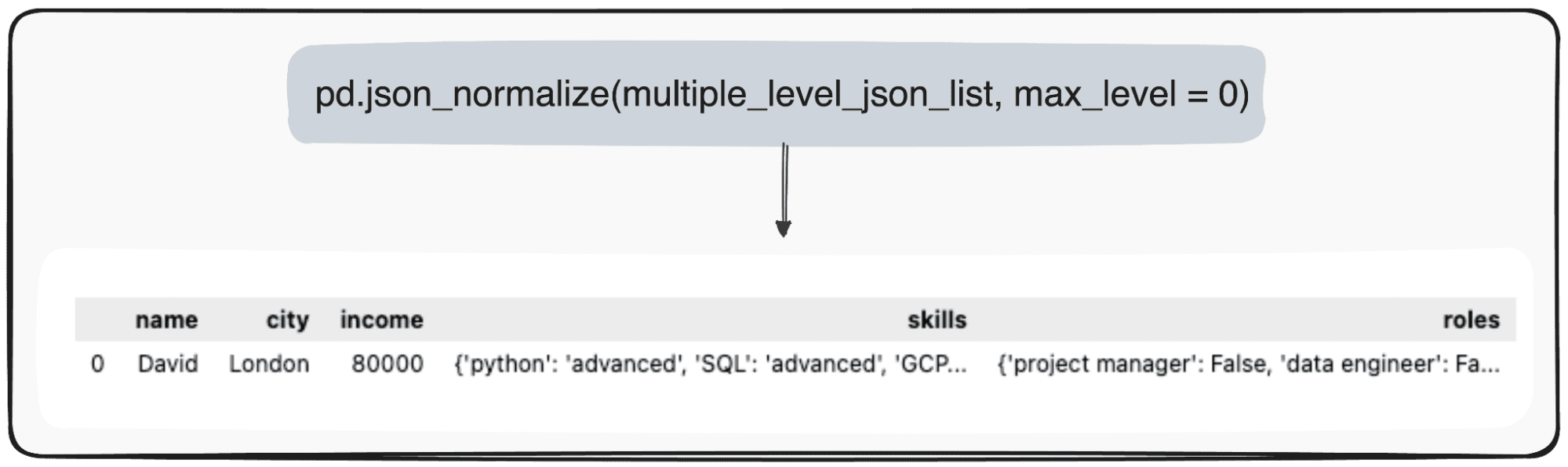

However, let’s imagine that we just want to transform our top level. We can do this by defining the max_level parameter to 0 (the max_level that we want to extend).

pd.json_normalize(mutliple_level_json_list, max_level = 0)Pending values will be persisted in JSON files in our pandas dataframe.

Photo by the author

The last case we can find is a nested list in a JSON field. So first we define our JSONs to exploit.

Code by author

We can effectively manage this data using Pandas in Python. The pd.json_normalize() function is particularly useful in this context. It can flatten JSON data, including nested list, into a structured format suitable for parsing. When this function is applied to our JSON data, it generates a normalized table that includes the nested list as part of its fields.

Moreover, Pandas offers the opportunity to further refine this process. Using the record_path parameter in pd.json_normalize(), we can direct the function to specifically normalize a nested list.

This action results in a separate table for the list content only. By default, this process will only expand the items in the list. However, to enrich this table with additional context, such as preserving the associated ID for each record, we can exploit the meta parameter.

Image by author

In summary, converting JSON data to CSV files using Python’s Pandas library is basic and proficient.

JSON is still the most popular format in current data storage and exchange, especially in NoSQL databases and REST APIs. However, it poses some crucial analytical challenges when processing data in its raw format.

The key role of the Pandas pd.json_normalize() function turns out to be a great way to handle such formats and convert our data to the Pandas DataFrame format.

I hope you found this guide useful and that the next time you deal with JSON, you will be able to do it more efficiently.

You can check the corresponding Jupyter notebook in according to the GitHub repository.

Józef Ferrer is an analytics engineer from Barcelona. He graduated in engineering physics and currently works in the field of data science applied to human mobility. He is a part-time content creator focusing on data science and technology. Josep writes about all things AI, discussing the application of the ongoing explosion in the field.