
# Introduction

Most data scientists learn pandas by reading tutorials and copying patterns that work.

This is a good way to start, but it often leads beginners to develop bad habits. iterrows() loops, intermediate variable assignments, and repeated merge() calls are examples of code that is technically correct but slower than necessary and harder to read than it should be.

The following patterns are not edge cases. They cover the most common everyday operations in data science, such as filtering, transforming, joining, grouping, and calculating conditional columns.

In each of them, there is a common approach and a better approach, and the distinction is usually one of awareness, not complexity.

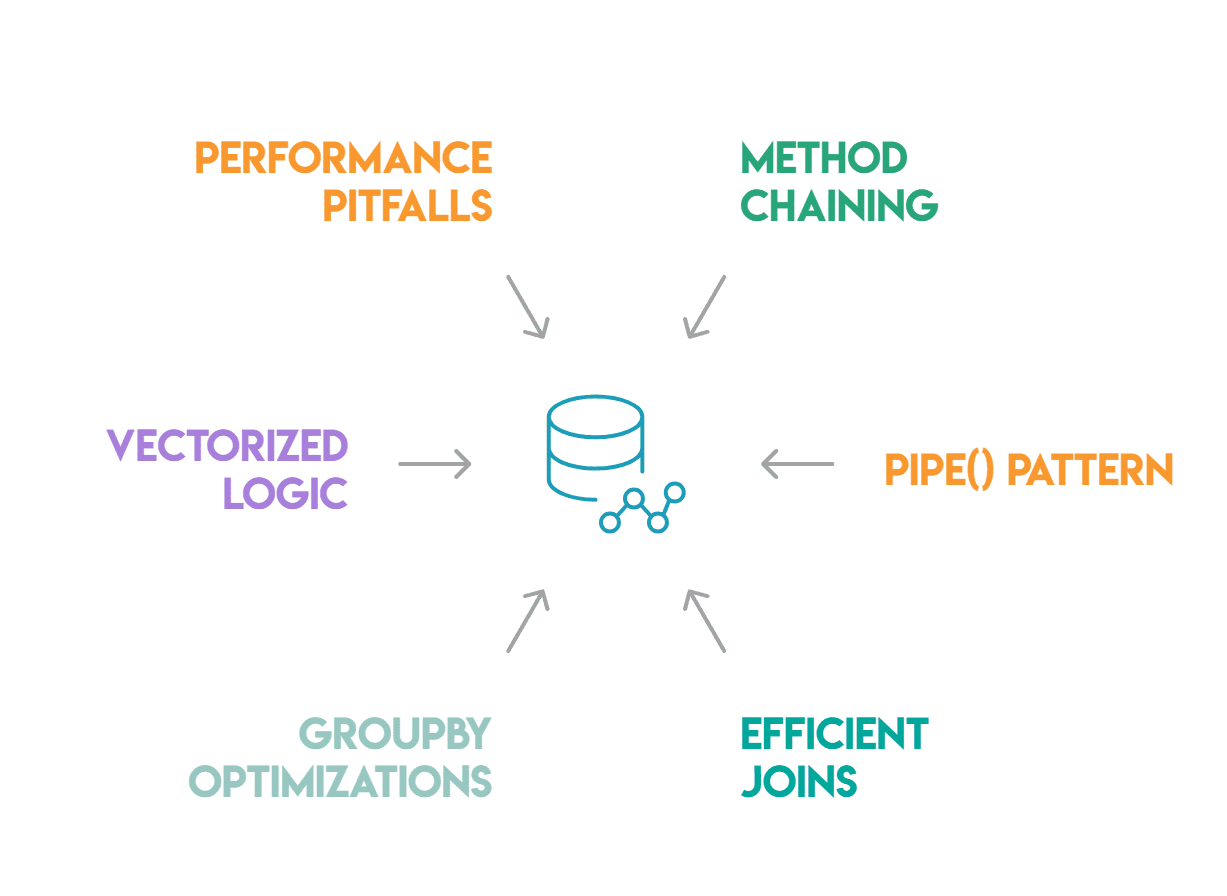

These six have the greatest impact: method chaining, the pipe() pattern, efficient joins and merges, groupby optimizations, vectorized conditional logic, and performance pitfalls.

# Method chaining

Intermediate variables can make your code feel more organized, but they often just add noise. Method chaining lets you write a sequence of transformations as a single expression that reads naturally and avoids naming objects that don't need their own identifiers.

Instead of this:

```python
df1 = df[df['status'] == 'active']
df2 = df1.dropna(subset=['revenue'])
df3 = df2.assign(revenue_k=df2['revenue'] / 1000)
result = df3.sort_values('revenue_k', ascending=False)
```

You write this:

```python
result = (
    df
    .query("status == 'active'")
    .dropna(subset=['revenue'])
    .assign(revenue_k=lambda x: x['revenue'] / 1000)
    .sort_values('revenue_k', ascending=False)
)
```

The lambda in assign() is important here.

When chaining, the current state of the DataFrame cannot be accessed by name; you have to use a lambda to refer to it. The most common cause of broken chains is forgetting this, which usually results in a NameError, a KeyError, or a stale reference to a variable that was defined earlier in the script.
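A minimal sketch of the difference, using made-up data (the column values and the is_large column are illustrative assumptions, not part of the original example). The second assign() needs a column that only exists inside the chain, so referring to df by name fails:

```python
import pandas as pd

# Hypothetical sample data for illustration only.
df = pd.DataFrame({"revenue": [2000.0, 500.0, None]})

# Each lambda receives the DataFrame as it exists at that step of the chain,
# so the second assign() can see the revenue_k column created by the first.
result = (
    df
    .assign(revenue_k=lambda x: x["revenue"] / 1000)
    .assign(is_large=lambda x: x["revenue_k"] > 1)
)

# Referring to df by name looks at the original frame, which has no
# revenue_k column yet, so the lookup fails before assign() even runs.
try:
    (
        df
        .assign(revenue_k=df["revenue"] / 1000)
        .assign(is_large=df["revenue_k"] > 1)
    )
except KeyError as exc:
    error = exc
```

When the referenced column does exist on the original frame, the mistake is quieter: the expression is computed on stale data and no exception is raised.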

Another mistake to watch for is using inplace=True inside a chain. Methods called with inplace=True return None, which immediately breaks the chain. When writing chained code, avoid in-place operations: they generally provide no memory benefit and make the code harder to follow.
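The following sketch, on a small hypothetical frame, shows what happens when inplace=True ends up in the middle of a chain:

```python
import pandas as pd

# Hypothetical sample data for illustration only.
df = pd.DataFrame({"revenue": [300.0, None, 100.0]})

# With inplace=True the method modifies its target and returns None.
returned = df.copy().dropna(subset=["revenue"], inplace=True)

# Calling the next method on that None raises AttributeError.
try:
    df.copy().dropna(subset=["revenue"], inplace=True).sort_values("revenue")
except AttributeError as exc:
    chain_error = exc

# Without inplace, each step returns a new DataFrame and the chain continues.
result = df.dropna(subset=["revenue"]).sort_values("revenue")
```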

# The pipe() pattern

When one of your transformations is complex enough to deserve its own function, pipe() lets you keep it inside the chain.

pipe() passes the DataFrame as the first argument to any callable:

```python
def normalize_columns(df, cols):
    # Work on a copy so the caller's DataFrame is not modified in place.
    df = df.copy()
    # Standardize each column: subtract its mean, divide by its standard deviation.
    df[cols] = (df[cols] - df[cols].mean()) / df[cols].std()
    return df

result = (
    df
    .query("status == 'active'")
    .pipe(normalize_columns, cols=['revenue', 'sessions'])
    .sort_values('revenue', ascending=False)
)
```

This lets you keep complex transformation logic in a named, testable function while preserving the chain. Each piped function can be tested on its own, which is hard to do when the logic is buried in a long chain.

The practical value of pipe() goes beyond appearance. Splitting a processing pipeline into named functions and combining them with pipe() makes the code self-documenting. Anyone reading the sequence can understand each step from the function name without having to analyze the implementation.

It also makes it easier to swap or skip steps when debugging: if you comment out one pipe() call, the rest of the chain will continue to function smoothly.
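As a rough sketch of that workflow, the step functions below (drop_missing_revenue, add_revenue_k, and keep_top) are hypothetical names invented for this illustration. One step is commented out, and the remaining steps still run because none of them depends on it:

```python
import pandas as pd

def drop_missing_revenue(df):
    """Remove rows where revenue is missing."""
    return df.dropna(subset=["revenue"])

def add_revenue_k(df):
    """Add revenue expressed in thousands."""
    return df.assign(revenue_k=df["revenue"] / 1000)

def keep_top(df, n):
    """Keep the n rows with the highest revenue."""
    return df.nlargest(n, "revenue")

# Hypothetical sample data for illustration only.
df = pd.DataFrame({"revenue": [5000.0, None, 1200.0, 800.0]})

result = (
    df
    .pipe(drop_missing_revenue)
    .pipe(add_revenue_k)
    # .pipe(keep_top, n=2)  # temporarily skipped while debugging
)
```

Because every step is an ordinary function, each one can also be called directly in a unit test, for example keep_top(result, n=2).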

# Efficient joins and merges

One of the most misused functions in pandas is merge(). The two most common errors are many-to-many joins and silent row inflation.

If both data frames have duplicate values in the join key, merge() produces the Cartesian product of the matching rows. For example, if the join key is duplicated on both sides, joining a 500-row "users" table with an "events" table can produce millions of rows.

This does not raise an error; it just produces a DataFrame that looks correct but is larger than expected, which you only notice once you check its shape.

The solution is the validate parameter:

```python
df.merge(other, on='user_id', validate="many_to_one")
```

It raises a MergeError immediately if the many-to-one assumption is violated. Use "one_to_one", "one_to_many", or "many_to_one" depending on what you expect from the join.
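Here is a small sketch of both behaviors side by side. The events and users tables are made-up data in which the lookup table accidentally contains a duplicated key:

```python
import pandas as pd
from pandas.errors import MergeError

# Hypothetical sample data for illustration only.
events = pd.DataFrame({
    "user_id": [1, 1, 2],
    "event": ["view", "click", "view"],
})
# user_id 1 appears twice, so the lookup side is not unique as assumed.
users = pd.DataFrame({
    "user_id": [1, 1, 2],
    "plan": ["free", "pro", "free"],
})

# Without validation, the duplicated key silently inflates the result:
# 2 events x 2 user rows for user 1, plus 1 row for user 2.
inflated = events.merge(users, on="user_id")

# With validation, the same merge fails fast instead.
try:
    events.merge(users, on="user_id", validate="many_to_one")
except MergeError as exc:
    merge_error = exc
```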

The indicator=True parameter is equally useful for debugging:

```python
result = df.merge(other, on='user_id', how='left', indicator=True)
result['_merge'].value_counts()
```

This parameter adds a _merge column showing whether each row comes from 'left_only', 'right_only', or 'both'. It is the fastest way to catch rows that didn't match when you expected them to.
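To make that concrete, this sketch uses two small hypothetical tables and filters on the _merge column to isolate the rows that found no partner:

```python
import pandas as pd

# Hypothetical sample data for illustration only.
orders = pd.DataFrame({"user_id": [1, 2, 3], "amount": [10, 20, 30]})
users = pd.DataFrame({"user_id": [1, 2], "plan": ["free", "pro"]})

checked = orders.merge(users, on="user_id", how="left", indicator=True)

# Rows present only in the left table had no match in users.
unmatched = checked[checked["_merge"] == "left_only"]
```

Once the check is done, the helper column can be removed with checked.drop(columns="_merge").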

When both data frames share a common index, join() can be faster than merge() because it aligns directly on the index instead of matching on a column.
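A brief sketch of an index-based join, assuming two hypothetical frames that are both indexed by user_id. Any speed difference depends on the data and the pandas version, so treat this as an illustration of the syntax and not as a benchmark:

```python
import pandas as pd

# Hypothetical sample data for illustration only, both indexed by user_id.
users = pd.DataFrame(
    {"plan": ["free", "pro"]},
    index=pd.Index([1, 2], name="user_id"),
)
totals = pd.DataFrame(
    {"revenue": [120.0, 950.0]},
    index=pd.Index([1, 2], name="user_id"),
)

# join() aligns on the index by default.
joined = users.join(totals, how="left")

# The equivalent merge() has to be told to use both indexes.
merged = users.merge(totals, left_index=True, right_index=True, how="left")
```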

# Groupby optimizations

When working with a GroupBy, one of the most underused methods is transform(). The difference between agg() and transform() comes down to what shape you want back.

The agg() method returns one row per group. transform(), on the other hand, returns a result with the same shape as the original DataFrame, with each row filled with the aggregated value of its group. This makes it ideal for adding group-level statistics as new columns without having to merge them back later. It is also usually faster than the manual aggregate-and-merge approach because pandas doesn't have to align two data frames after the fact:

```python
df['avg_revenue_by_segment'] = df.groupby('segment')['revenue'].transform('mean')
```

This adds the average revenue for each segment directly to each row. The same result with agg() would require calculating the average and then merging it back on the segment key, two steps instead of one.
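For comparison, here are both routes on a small made-up frame. The two-step version is one possible way to write the aggregate-and-merge approach, not the only one:

```python
import pandas as pd

# Hypothetical sample data for illustration only.
df = pd.DataFrame({
    "segment": ["a", "a", "b"],
    "revenue": [100.0, 300.0, 50.0],
})

# Two steps: aggregate to one row per segment, then merge back.
segment_means = (
    df
    .groupby("segment", as_index=False)["revenue"]
    .mean()
    .rename(columns={"revenue": "avg_revenue_by_segment"})
)
two_step = df.merge(segment_means, on="segment", how="left")

# One step: transform() returns a value for every original row.
one_step = df.assign(
    avg_revenue_by_segment=lambda x: x.groupby("segment")["revenue"].transform("mean")
)
```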

For categorical grouping columns, always pass observed=True:

```python
df.groupby('segment', observed=True)['revenue'].sum()
```

Without this argument, pandas versions that default to observed=False calculate results for every category defined in the column's dtype, including combinations that do not appear in the actual data. For large data frames with many categories, this results in empty groups and unnecessary computation.
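The effect is easy to see on a small hypothetical frame whose segment dtype declares a category, "c", that never occurs in the data:

```python
import pandas as pd

# Hypothetical sample data: category "c" is declared but never used.
df = pd.DataFrame({
    "segment": pd.Categorical(["a", "b", "a"], categories=["a", "b", "c"]),
    "revenue": [10, 20, 30],
})

# observed=False keeps a group for every declared category.
all_categories = df.groupby("segment", observed=False)["revenue"].sum()

# observed=True keeps only categories that appear in the data.
observed_only = df.groupby("segment", observed=True)["revenue"].sum()
```

With several categorical keys the unobserved combinations multiply, which is where the wasted work becomes noticeable.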

# Vectorized conditional logic

Using apply() with a per-row lambda function is the least efficient way to calculate conditional values. It bypasses the C-level operations that make pandas fast by running a Python function separately on each row.

For binary conditions, NumPy's np.where() is a direct replacement:

```python
df['label'] = np.where(df['revenue'] > 1000, 'high', 'low')
```

For multiple conditions, np.select() handles them cleanly:

```python
conditions = [
    df['revenue'] > 10000,
    df['revenue'] > 1000,
    df['revenue'] > 100,
]
choices = ['enterprise', 'mid-market', 'small']
df['segment'] = np.select(conditions, choices, default="micro")
```

The np.select() function maps directly onto an if/elif/else structure at vectorized speed, evaluating the conditions in order and assigning the first matching choice. This is typically 50 to 100 times faster than the equivalent apply() on a DataFrame with a million rows.
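To show the correspondence with if/elif/else, this sketch runs a hypothetical label_revenue function through apply() and compares it with np.select() on a few made-up values. It checks only that the results agree, not how fast each one runs:

```python
import numpy as np
import pandas as pd

# Hypothetical sample data for illustration only.
df = pd.DataFrame({"revenue": [50, 500, 5000, 50000]})

def label_revenue(revenue):
    """Row-level version of the same rules, written as if/elif/else."""
    if revenue > 10000:
        return "enterprise"
    elif revenue > 1000:
        return "mid-market"
    elif revenue > 100:
        return "small"
    return "micro"

# Slow path: one Python function call per value.
apply_result = df["revenue"].apply(label_revenue)

# Vectorized path: conditions are checked in order, first match wins.
conditions = [
    df["revenue"] > 10000,
    df["revenue"] > 1000,
    df["revenue"] > 100,
]
choices = ["enterprise", "mid-market", "small"]
select_result = np.select(conditions, choices, default="micro")
```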

For numerical binning, conditional assignment can be replaced entirely by pd.cut() (equal-width bins) and pd.qcut() (quantile-based bins), which automatically return a categorical column without the need for NumPy. pandas takes care of everything, including labeling and boundary handling, when you pass the number of bins or the bin edges.
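A short sketch of both functions on made-up revenue values. The bin edges and label names are assumptions chosen to mirror the segments used earlier:

```python
import pandas as pd

# Hypothetical sample data for illustration only.
revenue = pd.Series([50, 500, 5000, 50000])

# Explicit bin edges. By default each bin includes its right edge,
# so values of 0 or below would fall outside every bin and become NaN.
segment = pd.cut(
    revenue,
    bins=[0, 100, 1000, 10000, float("inf")],
    labels=["micro", "small", "mid-market", "enterprise"],
)

# Quantile-based bins: q=2 splits the values at the median.
half = pd.qcut(revenue, q=2, labels=["bottom", "top"])
```

Passing an integer to bins in pd.cut() instead of a list of edges produces that many equal-width bins over the range of the data.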

# Performance pitfalls

Some common patterns slow down pandas code more than anything else.

For example, iterrows() iterates over DataFrame rows as (index, Series) pairs. This is an intuitive but slow approach. For a DataFrame with 100,000 rows, it can be 100 times slower than its vectorized counterpart.

The inefficiency comes from building a complete Series object for each row and executing Python code on the rows one at a time. As soon as you notice yourself writing for _, row in df.iterrows(), stop and think about whether np.where(), np.select(), or a groupby operation can replace it. In most cases, one of them can.

Using apply(axis=1) is faster than iterrows(), but it has the same problem: Python-level execution for each row. For any operation that can be expressed with NumPy or pandas built-in functions, the built-in method is almost always faster.
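As an illustration of that kind of rewrite, the sketch below builds the same label column three ways on a tiny made-up frame. It shows only that the results match; the speed gap becomes visible on much larger frames:

```python
import numpy as np
import pandas as pd

# Hypothetical sample data for illustration only.
df = pd.DataFrame({"revenue": [500, 1500, 2500]})

# Row-by-row loop: a Series object is built for every row.
labels = []
for _, row in df.iterrows():
    labels.append("high" if row["revenue"] > 1000 else "low")
looped = pd.Series(labels, index=df.index)

# apply(axis=1): shorter, but still one Python call per row.
applied = df.apply(lambda row: "high" if row["revenue"] > 1000 else "low", axis=1)

# Vectorized: one comparison over the whole column.
vectorized = pd.Series(
    np.where(df["revenue"] > 1000, "high", "low"),
    index=df.index,
)
```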

Object dtype columns are also an easy-to-miss source of slowness. When pandas stores strings as object dtype, operations on these columns run in Python rather than C. For low-cardinality columns such as status codes, region names, or categories, converting them to a categorical type can significantly speed up groupby and value_counts().

```python
df['status'] = df['status'].astype('category')
```

Finally, avoid chained assignment. Using df[df['revenue'] > 0]['label'] = 'positive' may or may not modify the original DataFrame, depending on whether pandas created a copy behind the scenes. The behavior is undefined. Use .loc with a boolean mask instead:

```python
df.loc[df['revenue'] > 0, 'label'] = 'positive'
```

It is unambiguous and raises no SettingWithCopyWarning.
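A minimal end-to-end sketch of the .loc form on made-up data. The default label "non-positive" is an assumption added so that every row has a value:

```python
import pandas as pd

# Hypothetical sample data for illustration only.
df = pd.DataFrame({"revenue": [-5, 10, 20]})

# Start with a default, then overwrite the rows selected by the mask.
df["label"] = "non-positive"
df.loc[df["revenue"] > 0, "label"] = "positive"
```

The row selection and the column assignment happen in a single indexing operation on df itself, so there is no intermediate object that could turn out to be a copy.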

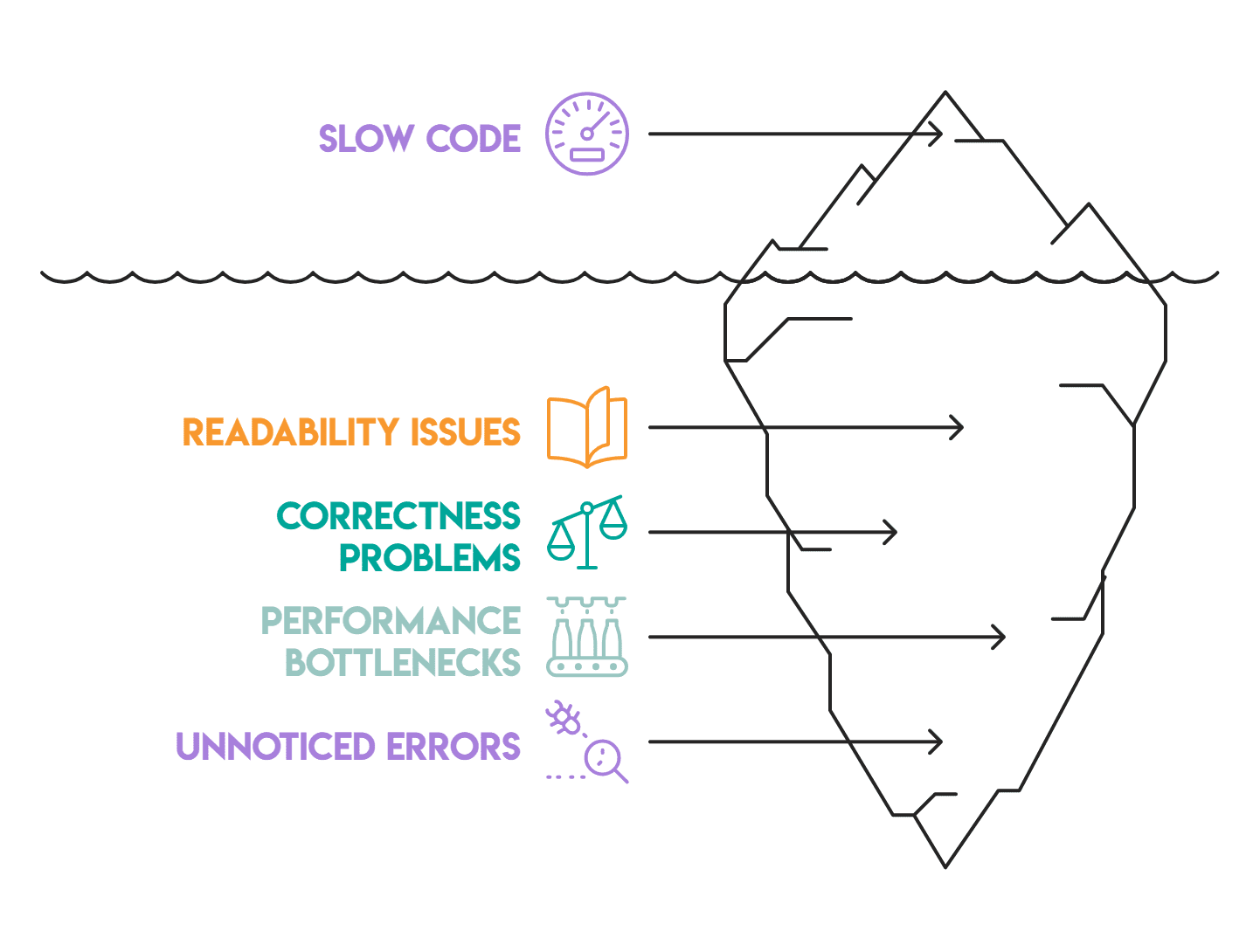

# Conclusion

These patterns distinguish code that works from code that works well: it is efficient enough to run on real data, readable enough to maintain, and organized in a way that makes it easy to test.

Method chaining and pipe() address readability, while the join and groupby patterns address correctness and efficiency. Vectorized logic and the performance pitfalls section address speed.

Most of the pandas code we review has at least two or three of these problems. They build up silently: a slow loop here, an unvalidated merge there, or an object dtype column that no one noticed. None of them cause obvious failures, so they persist. A good way to start is to fix them one at a time.

Nate Rosidi is a data scientist and product strategist. He is also an adjunct professor of analytics and the founder of StrataScratch, a platform that helps data scientists prepare for job interviews using real interview questions from top companies. Nate writes about the latest career trends, gives interview advice, shares data science projects, and discusses all things SQL.