Photo by the author

# Introduction

AI image editing has advanced rapidly. Tools like ChatGPT and Gemini have shown how powerful AI can be in creative work, leaving many wondering how it will change the future of graphic design. At the same time, open-source image editing models are improving quickly and closing the quality gap.

These models let you edit images with simple text prompts. With minimal effort, you can remove backgrounds, replace objects, enhance photos, and add artistic effects. What once required advanced design skills can now be done in just a few steps.

In this blog, we review five open-source AI models that excel at image editing. You can run them locally, use them via an API, or access them directly in your browser, depending on your workflow and needs.

# 1. FLUX.2 [klein] 9B

FLUX.2 [klein] is a high-performance open-source image generation and editing model designed for speed, quality, and flexibility. Developed by Black Forest Labs, it combines image generation and editing into one compact architecture, enabling end-to-end inference in less than a second on consumer hardware.

The FLUX.2 [klein] 9B Base is a full-capacity, undistilled base model that supports text-to-image and multi-reference image editing, making it a good fit for researchers, developers, and creators who want fine-grained control over results rather than relying on heavily distilled pipelines.

Key Features:

- Unified generation and editing: It supports text-to-image and image editing tasks within a single model architecture.

- Undistilled foundation model: Maintains the full training signal, offering greater flexibility, control and output variety.

- Multi-reference editing support: Allows you to edit an image based on multiple reference images for more precise results.

- Optimized for real-time use: It delivers state-of-the-art quality with very low latency, even on consumer GPUs.

- Open weights and ready to tune: Designed for training, research and custom LoRA pipelines, with compatibility with tools such as Diffusers and ComfyUI.

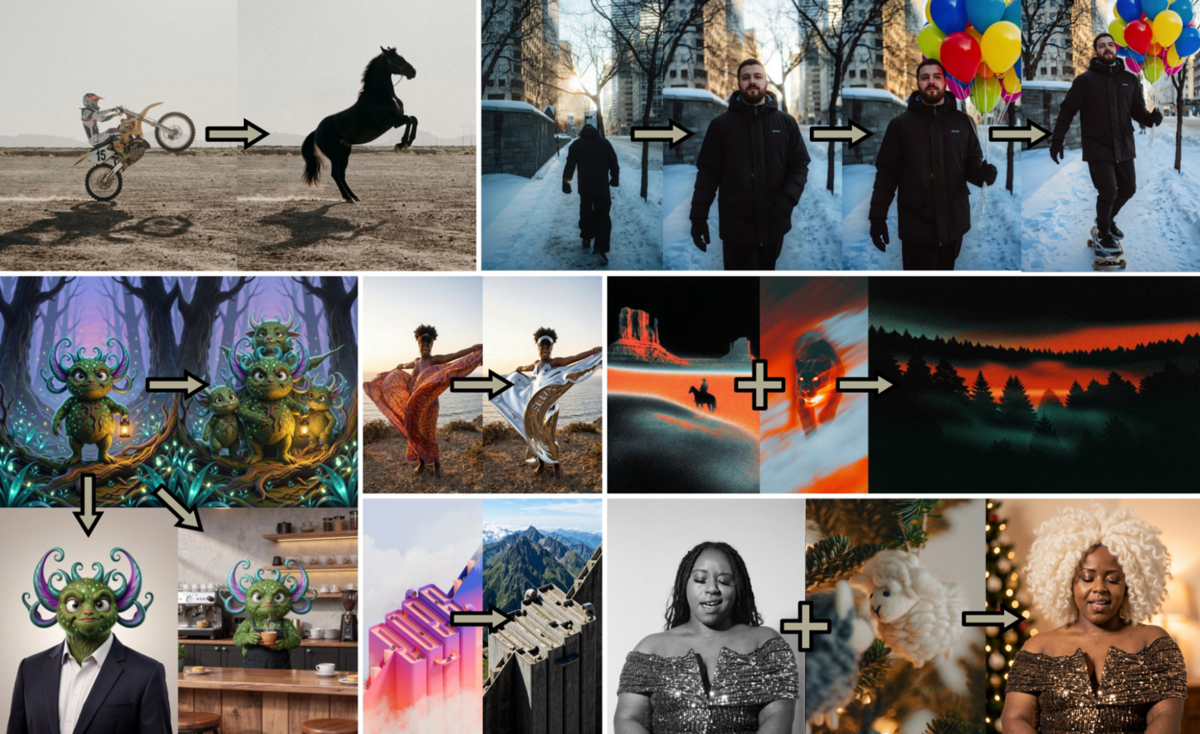

# 2. Qwen-Image-Edit-2511

Qwen-Image-Edit-2511 is an advanced open source image editing model focused on high consistency and precision. Developed by Alibaba Cloud as part of the Qwen family of models, it is based on Qwen-Image-Edit-2509 with significant improvements in image stability, character consistency and structural accuracy.

The model is designed for complex image editing tasks such as multi-person editing, industrial design workflows, and geometry-aware transformations, while being easy to integrate using Diffusers and browser-based tools such as Qwen Chat.

Key Features:

- Improved image and character consistency: Reduces image drift and preserves identity during single-person and multi-person edits.

- Editing multiple images and multiple people: Enables high-quality combination of multiple reference images into a consistent final result.

- Built-in LoRA integration: Integrates community-created LoRAs directly into the base model, unlocking advanced effects without additional configuration.

- Industrial design and engineering support: Optimized for product design tasks such as material replacement, batch design, and structural changes.

- Improved Geometric Reasoning: Supports geometry-aware editing, including construction lines and design annotations for technical applications.

# 3. FLUX.2 [dev] Turbo

FLUX.2 [dev] Turbo is a lightweight, fast image generation and editing adapter designed to dramatically reduce inference time without sacrificing quality.

Built as a distilled LoRA adapter for the FLUX.2 [dev] base model developed by Black Forest Labs, it enables high-quality results in just eight inference steps. This makes it an excellent choice for real-time applications, rapid prototyping, and interactive image workflows where speed is crucial.
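To make the adapter idea concrete, here is a minimal pure-Python sketch of how a LoRA update is generally merged into a weight matrix, and why the adapter stays small. The function names, the toy matrices, and the 4096-wide layer with rank 16 are illustrative assumptions, not values taken from the FLUX.2 [dev] Turbo release.

```python
def lora_param_count(d_out, d_in, rank):
    """Parameters in a rank-`rank` LoRA pair: up (d_out x rank) plus down (rank x d_in)."""
    return rank * (d_out + d_in)


def merge_lora(weight, up, down, scale=1.0):
    """Return weight + scale * (up @ down) using plain nested lists.

    weight: d_out x d_in, up: d_out x rank, down: rank x d_in.
    """
    rank = len(down)
    rows, cols = len(weight), len(weight[0])
    return [
        [
            weight[i][j] + scale * sum(up[i][k] * down[k][j] for k in range(rank))
            for j in range(cols)
        ]
        for i in range(rows)
    ]


# Hypothetical layer size and rank, chosen only to show the size difference.
full_params = 4096 * 4096
adapter_params = lora_param_count(4096, 4096, 16)
print(full_params // adapter_params)  # 128

# Toy 2x2 example with rank 1.
merged = merge_lora(
    weight=[[1.0, 0.0], [0.0, 1.0]],
    up=[[1.0], [2.0]],
    down=[[0.5, 0.5]],
)
print(merged)  # [[1.5, 0.5], [1.0, 2.0]]
```

Because the adapter only stores the two thin matrices, it can be shipped and loaded separately from the base weights, which is what makes this kind of add-on lightweight.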

Key Features:

- Ultra-fast inference in 8 steps: Achieves up to six times faster generation compared to a standard 50-step workflow.

- Quality preserved: Matches or exceeds the visual quality of the original FLUX.2 [dev] model despite heavy distillation.

- LoRA-based adapter: Lightweight and easy to plug into existing FLUX.2 pipelines with minimal overhead.

- Text-to-image and image editing support: It works for both generation and editing tasks in one configuration.

- Extensive ecosystem support: Available via hosted APIs, Diffusers, and ComfyUI for flexible deployment options.

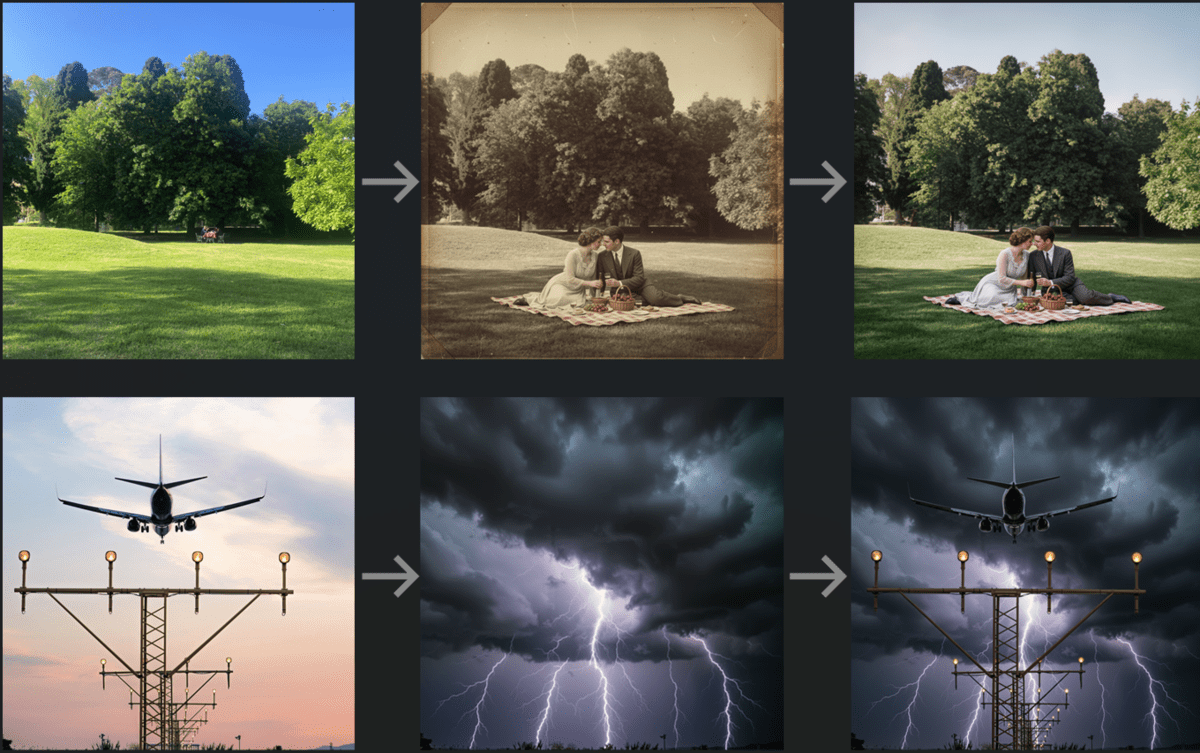
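The "up to six times" figure follows roughly from the step counts alone. The sketch below assumes every denoising step costs the same, and adds a hypothetical fixed overhead (for example text encoding and image decoding) to show why the end-to-end gain can land below the ideal ratio. The timing values are made up for illustration and are not measurements.

```python
def ideal_speedup(baseline_steps, fast_steps):
    """Speedup if runtime were purely proportional to the number of steps."""
    return baseline_steps / fast_steps


def end_to_end_speedup(baseline_steps, fast_steps, seconds_per_step, fixed_overhead_seconds):
    """Speedup when each run also pays a fixed cost that does not shrink with fewer steps."""
    baseline = fixed_overhead_seconds + baseline_steps * seconds_per_step
    fast = fixed_overhead_seconds + fast_steps * seconds_per_step
    return baseline / fast


print(ideal_speedup(50, 8))  # 6.25

# Hypothetical timings: 0.5 s per step and 1 s of fixed overhead per image.
print(round(end_to_end_speedup(50, 8, 0.5, 1.0), 2))  # 5.2
```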

# 4. LongCat-Image-Edit

LongCat-Image-Edit is a state-of-the-art, open-source image editing model designed for highly precise, instruction-driven edits with high visual consistency. Developed by Meituan as the image editing counterpart of LongCat-Image, it supports bilingual editing in both Chinese and English.

The model excels at handling complex editing instructions while preserving unedited areas, making it especially effective for multi-step, reference-based image editing workflows.

Key Features:

- Instruction-based precision editing: It supports global edits, local edits, text modification, and reference-based editing with strong semantic understanding.

- Strong consistency preservation: Preserves layout, texture, color tone, and subject identity in unedited regions, even in multi-turn edits.

- Bilingual editing support: Accepts Chinese and English prompts for wider accessibility and use cases.

- State-of-the-art open-source performance: It provides SOTA results among open-source image editing models with improved inference efficiency.

- Text rendering optimization: Uses specialized character-level encoding for quoted text, enabling more accurate text generation in images.

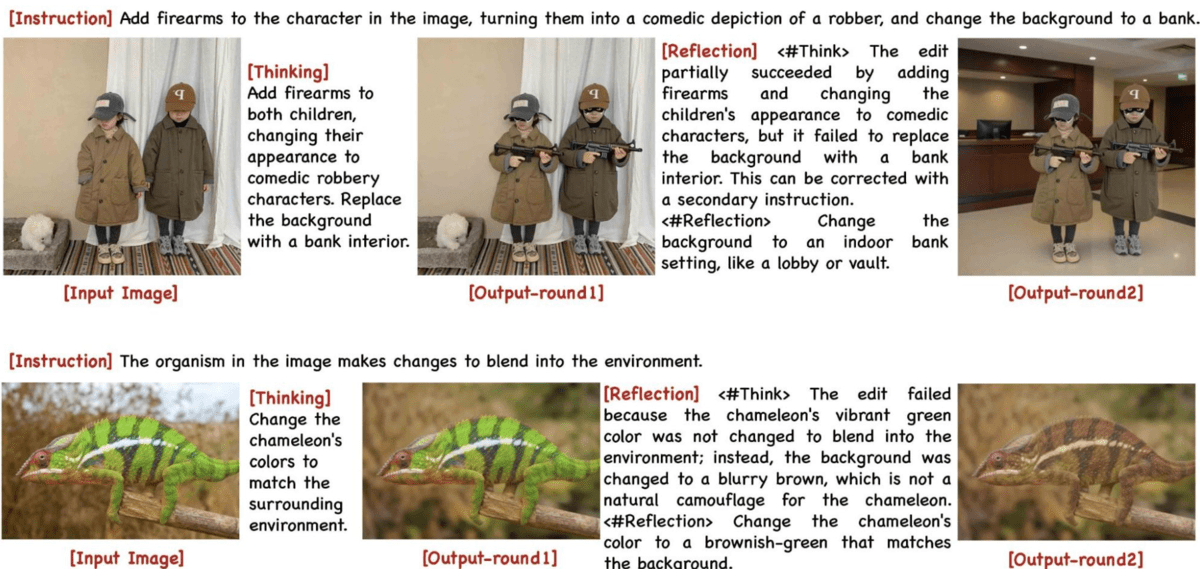
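As a rough illustration of what character-level handling of quoted text can look like on the prompt side, the sketch below splits a prompt into plain segments and quoted segments, and breaks only the quoted ones into individual characters. This is a simplified assumption about the general idea, not LongCat-Image-Edit's actual tokenizer; the function name and the choice of quote marks are hypothetical.

```python
import re

# Assumption: text to render is wrapped in straight or curly double quotes.
QUOTED = re.compile(r'"([^"]*)"|“([^”]*)”')


def split_quoted_segments(prompt):
    """Return a list of ("plain", str) and ("quoted", list_of_chars) segments in order."""
    segments = []
    cursor = 0
    for match in QUOTED.finditer(prompt):
        if match.start() > cursor:
            segments.append(("plain", prompt[cursor:match.start()]))
        text = match.group(1) if match.group(1) is not None else match.group(2)
        # Quoted text is split per character so each glyph can be encoded on its own.
        segments.append(("quoted", list(text)))
        cursor = match.end()
    if cursor < len(prompt):
        segments.append(("plain", prompt[cursor:]))
    return segments


print(split_quoted_segments('Change the sign to "OPEN" now'))
# [('plain', 'Change the sign to '), ('quoted', ['O', 'P', 'E', 'N']), ('plain', ' now')]
```

In practice this means that putting the exact text you want rendered inside quotation marks gives the model the clearest signal about which characters must appear in the image.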

# 5. Step1X-Edit-v1p2

Step1X-Edit-v1p2 is an open-source, reasoning-based image editing model designed to improve instruction understanding and editing accuracy. Developed by StepFun AI, it introduces native reasoning capabilities through structured thinking and reflection mechanisms. This allows the model to interpret complex or abstract editing instructions, carefully apply changes, and then review and correct the output before finalizing it.

As a result, Step1X-Edit-v1p2 achieves high performance on benchmarks such as KRIS-Bench and GEdit-Bench, especially in scenarios requiring precise, multi-step edits.

Key Features:

- Reasoning-based image editing: Uses explicit stages of thinking and reflection to better understand instructions and reduce unintended changes.

- High performance in benchmarks: Provides competitive results on KRIS-Bench and GEdit-Bench among open source image editing models.

- Better understanding of instructions: It handles abstract, detailed, or multi-part editing prompts more reliably.

- Reflection-based correction: Reviews edited results to fix errors and decide when editing is complete.

- Research-focused and extensible: Designed for experimentation, with multiple modes that trade off speed, accuracy, and depth of reasoning.
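The think, edit, and reflect cycle described above can be pictured as a simple control loop. The sketch below is only a conceptual illustration under stated assumptions: the function names are hypothetical, the "image" is a plain dictionary of attributes, and the toy editor deliberately applies one change per round so the reflection step has something to correct. It does not reflect Step1X-Edit-v1p2's internal implementation.

```python
def edit_with_reflection(image, instruction, think, apply_edit, reflect, max_rounds=3):
    """Plan once, then alternate editing and reviewing until the review accepts the result."""
    plan = think(image, instruction)
    result = image
    for round_index in range(1, max_rounds + 1):
        result = apply_edit(result, plan)
        done, plan = reflect(result, instruction)
        if done:
            return result, round_index
    return result, max_rounds


# Toy stand-ins (hypothetical): the instruction is the desired attribute values.
def toy_think(image, instruction):
    return [(key, value) for key, value in instruction.items() if image.get(key) != value]


def toy_apply_edit(image, plan):
    edited = dict(image)
    if plan:
        key, value = plan[0]  # imperfect editor: only one change lands per round
        edited[key] = value
    return edited


def toy_reflect(result, instruction):
    remaining = [(key, value) for key, value in instruction.items() if result.get(key) != value]
    return (not remaining, remaining)


final, rounds = edit_with_reflection(
    {"sky": "gray", "car": "red"},
    {"sky": "blue", "car": "green"},
    toy_think,
    toy_apply_edit,
    toy_reflect,
)
print(final, rounds)  # {'sky': 'blue', 'car': 'green'} 2
```

The `max_rounds` cap mirrors the speed versus depth trade-off: more review rounds can catch more mistakes but cost more inference time.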

# Final thoughts

Open-source image editing models are maturing rapidly, offering creators and developers a serious alternative to closed-source tools. They now combine speed, consistency, and precise control, making advanced image editing easier to experiment with and deploy.

Models at a glance:

- FLUX.2 [klein] 9B focuses on high-quality generation and flexible editing in one undistilled base model.

- Qwen-Image-Edit-2511 stands out for its consistent, structure-aware edits, especially in multi-person and design-heavy scenarios.

- FLUX.2 [dev] Turbo prioritizes speed, delivering strong results in near real time with minimal inference steps.

- LongCat-Image-Edit specializes in precise, instruction-based edits while maintaining visual consistency across multiple turns.

- Step1X-Edit-v1p2 pushes image editing further by adding reasoning, allowing the model to think through complex changes before finalizing them.

Abid Ali Awan (@1abidaliawan) is a certified data science professional who loves building machine learning models. Currently, he focuses on creating content and writing technical blogs about machine learning and data science technologies. Abid holds a Master’s degree in Technology Management and a Bachelor’s degree in Telecommunications Engineering. His vision is to build an AI product using a graph neural network for students struggling with mental illness.