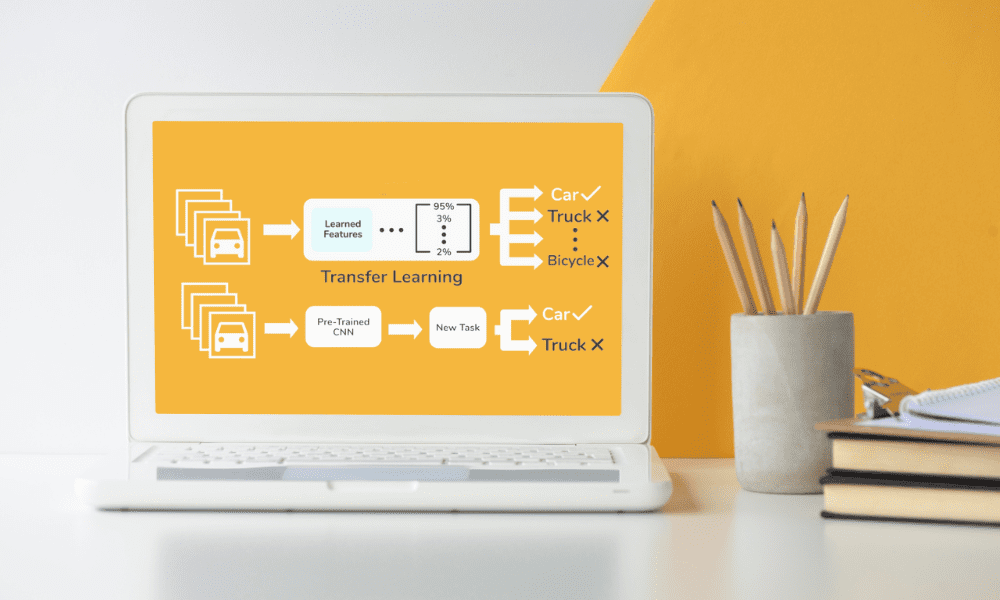

Photo by editor | Transfer learning flow from Skyengine.ai

In machine learning, where the appetite for data is insatiable, not everyone has the luxury of access to large datasets to learn from. That’s where transfer learning comes to the rescue, especially when you have limited data or the cost of acquiring more is just too high.

In this article, we’ll take a closer look at the magic of transfer learning, showing how it cleverly uses models that have already learned from large datasets to dramatically speed up your own machine learning projects, even when the data is thin.

I’ll also look at the obstacles of working in data-poor environments, peer into the future, and celebrate the versatility and effectiveness of transfer learning across disciplines.

Transfer learning is a machine learning technique that takes a model developed for one task and applies it to a second, related task, developing it further.

At its core, this approach is based on the idea that knowledge gained from learning one problem can help solve another, somewhat similar problem.

For example, a model trained to recognize objects in images can be customized to recognize specific types of animals in photos, using its prior knowledge of shapes, textures and patterns.

It accelerates the learning process while significantly reducing the amount of data required. In small-data scenarios, this is especially beneficial because it bypasses the traditional need for large datasets to achieve high model accuracy.

Using pre-trained models allows practitioners to bypass many of the initial hurdles that are commonly associated with model development, such as feature selection and model architecture design.

Pre-trained models are the true foundation of transfer learning. These models, often developed and trained on large datasets by research institutions or tech giants, are made available for public use.

The versatility of pre-trained models is remarkable: they cover a variety of applications, from image and speech recognition to natural language processing. Adopting these models for new tasks can drastically reduce development time and resources.

For example, models trained on the ImageNet database, which contains millions of tagged images in thousands of categories, provide a rich set of features for a wide range of image recognition tasks.

The ability to adapt these models to new, smaller datasets highlights their value by enabling the extraction of complex features without the need for extensive computational resources.
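The feature-extraction idea described above can be sketched in a few lines. This is a minimal, framework-free illustration rather than a production recipe: `PRETRAINED_WEIGHTS` is a hypothetical stand-in for weights that would normally be loaded from a model trained on a large dataset, and the eight-point dataset is invented for the example. The "backbone" stays frozen, and only a small new classification head is trained on the target data.

```python
import math

# Hypothetical stand-in for a pre-trained feature extractor. In a real project
# these weights would come from a model trained on a large dataset.
PRETRAINED_WEIGHTS = [
    [1.0, 0.0],
    [0.0, 1.0],
    [1.0, -1.0],
]


def extract_features(x, weights=PRETRAINED_WEIGHTS):
    """Frozen backbone: a fixed linear map followed by tanh. Never updated."""
    return [math.tanh(sum(w * xi for w, xi in zip(row, x))) for row in weights]


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def predict(head, bias, feats):
    """New task-specific head: logistic regression on the frozen features."""
    return sigmoid(sum(h * f for h, f in zip(head, feats)) + bias)


def log_loss(head, bias, data):
    eps = 1e-12  # guards against log(0)
    total = 0.0
    for feats, y in data:
        p = predict(head, bias, feats)
        total -= y * math.log(p + eps) + (1 - y) * math.log(1 - p + eps)
    return total / len(data)


def train_head(data, lr=0.5, epochs=200):
    """Train only the head with full-batch gradient descent."""
    n_feats = len(data[0][0])
    head, bias = [0.0] * n_feats, 0.0
    for _ in range(epochs):
        grad_h, grad_b = [0.0] * n_feats, 0.0
        for feats, y in data:
            err = predict(head, bias, feats) - y
            for j, f in enumerate(feats):
                grad_h[j] += err * f / len(data)
            grad_b += err / len(data)
        head = [h - lr * g for h, g in zip(head, grad_h)]
        bias -= lr * grad_b
    return head, bias


# A deliberately tiny, made-up target dataset: eight labeled points.
small_dataset = [
    ([2.0, 0.5], 1), ([1.5, -0.5], 1), ([1.0, 0.2], 1), ([2.5, 1.0], 1),
    ([-2.0, 0.3], 0), ([-1.5, -0.4], 0), ([-1.0, 0.1], 0), ([-2.5, -1.0], 0),
]

# Run every input through the frozen backbone once, then fit the head.
features = [(extract_features(x), y) for x, y in small_dataset]
loss_before = log_loss([0.0] * 3, 0.0, features)
head, bias = train_head(features)
loss_after = log_loss(head, bias, features)
print(f"loss before: {loss_before:.3f}, loss after: {loss_after:.3f}")
```

Because the backbone is never updated, the only parameters learned from the small dataset are the three head weights and the bias, which is what keeps the data requirement low. With a real framework the same pattern would typically mean loading a published pre-trained model, disabling gradient updates on its layers, and replacing its final layer.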

Working with limited data presents unique challenges. The main problem is overfitting, where the model learns too well from the training data, including noise and outliers, leading to poor performance on unseen data.

Transfer learning mitigates this risk by using models pre-trained on diverse datasets, making generalization easier.

However, the effectiveness of transfer learning depends on the suitability of the pre-trained model for the new task. If the tasks are too different, the benefits of transfer learning may not be fully realized.

Moreover, fine-tuning a pre-trained model with a small dataset requires careful parameter tuning to avoid losing valuable knowledge the model has already acquired.
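One common way to approach this kind of careful tuning is to give the pre-trained part of the model a much smaller learning rate than the newly added part. The sketch below is a toy, framework-free illustration under stated assumptions: the one-weight "backbone" and one-weight "head", the starting values, the learning rates, and the synthetic target data are all hypothetical and chosen only to make the effect visible.

```python
import math


def predict(w_back, w_head, x):
    """Tiny two-stage model: a 'backbone' feature followed by a linear 'head'."""
    return w_head * math.tanh(w_back * x)


def mse(w_back, w_head, data):
    return sum((predict(w_back, w_head, x) - y) ** 2 for x, y in data) / len(data)


def gradients(w_back, w_head, data):
    """Analytic gradients of the MSE loss with respect to both weights."""
    g_back = g_head = 0.0
    for x, y in data:
        feat = math.tanh(w_back * x)
        residual = w_head * feat - y
        g_head += 2 * residual * feat
        # d/dw_back tanh(w_back * x) = x * (1 - tanh^2)
        g_back += 2 * residual * w_head * x * (1 - feat ** 2)
    return g_back / len(data), g_head / len(data)


def fine_tune(w_back, w_head, data, lr_back=0.01, lr_head=0.1, steps=100):
    """Gradient descent with a small step for the pre-trained weight
    and a larger step for the new head (both rates are illustrative)."""
    for _ in range(steps):
        g_back, g_head = gradients(w_back, w_head, data)
        w_back -= lr_back * g_back
        w_head -= lr_head * g_head
    return w_back, w_head


PRETRAINED_W_BACK = 1.0  # hypothetical value "learned" on a source task
INITIAL_W_HEAD = 0.1     # new head, starts near zero

# Synthetic target task that is related to, but not identical to, the source.
target_data = [(x, 2.0 * math.tanh(1.2 * x)) for x in (-1.0, -0.5, 0.5, 1.0)]

loss_before = mse(PRETRAINED_W_BACK, INITIAL_W_HEAD, target_data)
w_back, w_head = fine_tune(PRETRAINED_W_BACK, INITIAL_W_HEAD, target_data)
loss_after = mse(w_back, w_head, target_data)

print(f"backbone drift: {abs(w_back - PRETRAINED_W_BACK):.4f}")
print(f"head drift:     {abs(w_head - INITIAL_W_HEAD):.4f}")
print(f"loss: {loss_before:.4f} -> {loss_after:.4f}")
```

The head does most of the adapting while the pre-trained weight moves only slightly, which is the intent: the new task is fitted without overwriting what the model already knows. Deep learning frameworks generally support the same idea through per-layer or per-group learning rates.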

Apart from these obstacles, another scenario where data may be at risk is the compression process. This applies even to quite simple actions, for example when you want to compress PDF files, but fortunately, these kinds of problems can be prevented by making changes carefully.

In the context of machine learning, ensuring the completeness and quality of data, even when it is compressed for storage or transmission, is crucial to developing a reliable model.

Transfer learning, which relies on pre-trained models, further highlights the need to carefully manage data assets to prevent information loss and ensure that each piece of data is fully utilized during the training and application phases.

Balancing the retention of learned features with adaptation to new tasks is a delicate process that requires a deep understanding of both the model and the available data.

The horizon of transfer learning continues to expand as research pushes the boundaries of what is possible.

One exciting direction is the development of more universal models that can be applied to a wider range of tasks with minimal changes.

Another area of research is improving algorithms for transferring knowledge between very different fields, increasing the flexibility of transfer learning.

There is also growing interest in automating the process of selecting and tuning pre-trained models for specific tasks, which could further lower the barrier to entry when using advanced machine learning techniques.

These advances will make transfer learning even more accessible and effective, opening up new opportunities for its application in fields where data is limited or hard to collect.

The beauty of transfer learning is its adaptability: it can be applied across all kinds of different fields.

From health care, where it can help diagnose diseases with limited patient data, to robotics, where it accelerates the learning of new tasks without extensive training, the potential applications are enormous.

In the field of natural language processing, transfer learning has enabled significant advances in language models using relatively small datasets.

This adaptability not only demonstrates the effectiveness of transfer learning, but also highlights its potential to democratize access to advanced machine learning techniques, enabling smaller organizations and researchers to pursue projects that were previously out of reach due to data constraints.

Even on a platform like Django, you can use transfer learning to expand your application’s capabilities without having to start from scratch.

Transfer learning goes beyond the boundaries of specific programming languages or frameworks, enabling advanced machine learning models to be applied to projects developed in a variety of environments.

Transfer learning is not just about overcoming data scarcity; it is also a testament to the efficiency and resource optimization of machine learning.

By building on knowledge from previously trained models, researchers and developers can achieve significant results with less computing power and time.

This efficiency is particularly important in scenarios where resources are limited, whether it’s data, computational capabilities, or both.

Since 43% of all websites use WordPress as their CMS, it is a great testing ground for ML models specializing in, say, web scraping or comparing different types of content for contextual and linguistic differences.

This emphasizes the practical benefits of transfer learning in real-world scenarios, where access to large, domain-specific datasets may be limited. Transfer learning also encourages the reuse of existing models, which aligns with sustainable practices by reducing the need for energy-intensive training from scratch.

This approach illustrates how the strategic use of resources can lead to significant advances in machine learning, making sophisticated models more accessible and environmentally friendly.

As we complete our exploration of transfer learning, it becomes clear that this technique is significantly changing machine learning as we know it, especially for projects struggling with limited data resources.

Transfer learning makes effective use of pre-trained models, enabling both small and large projects to achieve extraordinary results without the need for extensive datasets or computational resources.

Looking to the future, the potential for transfer learning is immense and diverse, and the prospect of making machine learning projects more feasible and less resource-intensive is not only promising; it is already becoming a reality.

This shift toward more accessible and effective machine learning practices has the potential to spur innovation in fields ranging from health care to the environment.

Transfer learning democratizes machine learning, making advanced techniques available to a much wider audience than ever before.

Nahla Davies is a programmer and technical writer. Before devoting herself full-time to technical writing, she managed, among other intriguing things, to serve as lead programmer for a 5,000-person experiential branding organization whose clients include Samsung, Time Warner, Netflix and Sony.