Photo by the author

Exploratory data analysis (EDA) is a key phase of the data analysis process, emphasizing the close examination of the internal details and characteristics of a data set.

Its main goal is to discover underlying patterns, capture the structure of a dataset, and identify any potential anomalies or relationships between variables.

When performing EDA, data scientists check the quality of the data. This ensures that further analysis is based on accurate and reliable information, thus reducing the likelihood of errors at subsequent stages.

So let’s walk through the basic steps needed to perform a good EDA in our next data science project.

I’m sure you’ve heard the phrase before:

Garbage in, garbage out

The quality of the input data is one of the most significant factors in the success of any data project.

Unfortunately, most data is messy to begin with. Through the process of exploratory data analysis, a dataset that is almost usable can be transformed into one that is fully usable.

It’s important to clarify that this is not a magic solution for cleaning any dataset. Nevertheless, many EDA strategies effectively address common problems encountered in datasets.

So, let’s go through the most basic steps, following Ayodele Oluleye’s book Exploratory Data Analysis with Python Cookbook.

1. Data collection

The first step in any data project is to have the data itself. In this first stage, data is collected from various sources for subsequent analysis.
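As a minimal sketch of this stage, assuming the data arrives as a CSV file, pandas can load it into a DataFrame in one call. The CSV content and column names below are made up for illustration; in a real project the argument to `pd.read_csv` would be a file path or URL.

```python
import io

import pandas as pd

# Hypothetical CSV content standing in for a real file or URL.
csv_text = """city,trips,avg_distance_km
Barcelona,120,4.2
Madrid,200,5.1
Valencia,80,3.7
"""

# pd.read_csv accepts a path, a URL, or any file-like object.
df = pd.read_csv(io.StringIO(csv_text))

print(df.shape)   # number of rows and columns
print(df.dtypes)  # type inferred for each column
print(df.head())  # first rows, as a quick sanity check
```

Checking the shape and the inferred types right after loading is a cheap way to catch parsing problems before any analysis starts.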

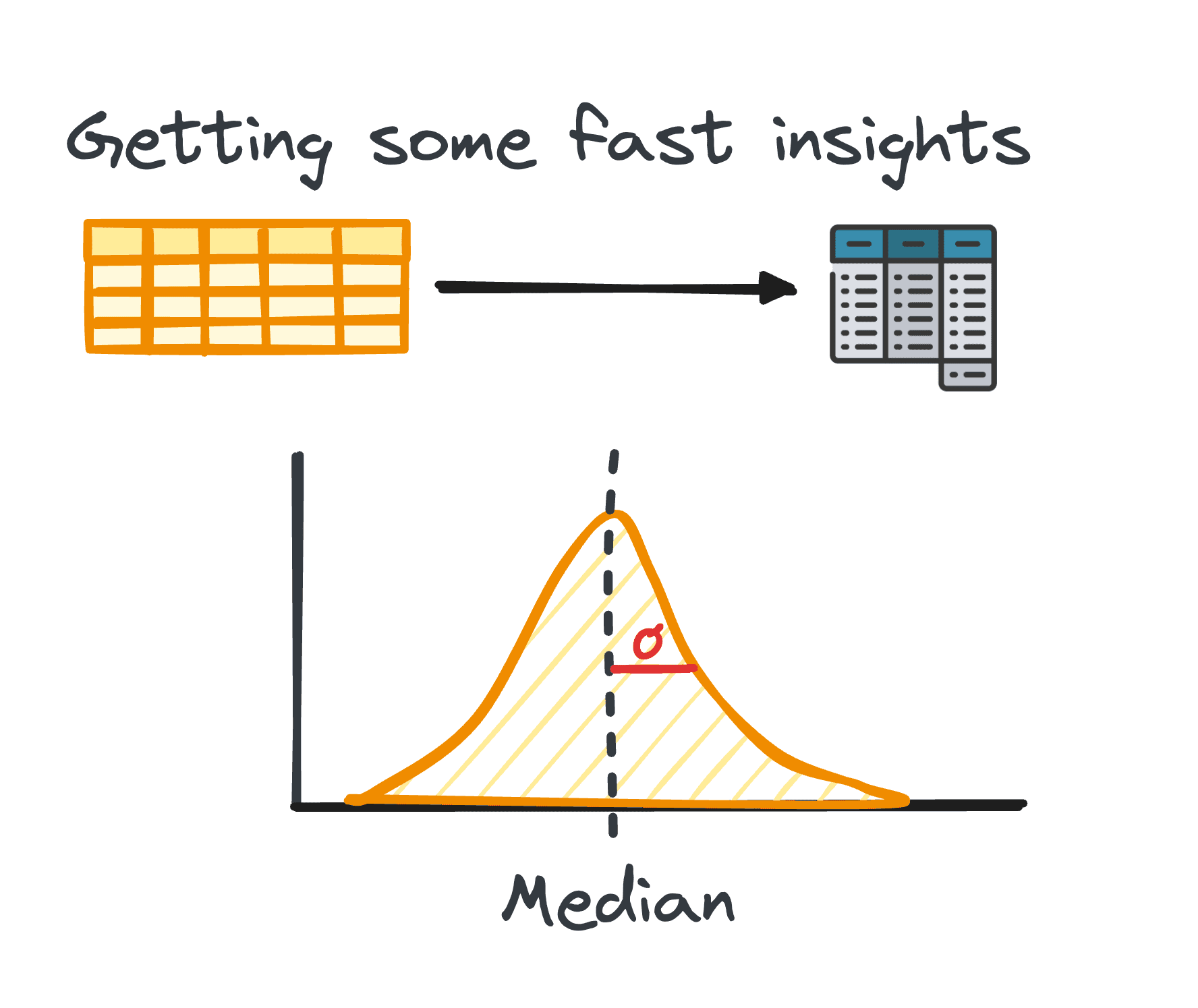

2. Summary statistics

In data analysis, it is quite common to work with tabular data. When analyzing such data, it is often necessary to gain quick insight into its patterns and distribution.

These initial observations serve as the basis for further exploration and in-depth analysis and are known as summary statistics.

They offer a concise overview of the distribution and patterns of a dataset, expressed through metrics such as mean, median, mode, variance, standard deviation, range, percentiles, and quartiles.
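The metrics listed above can be computed with pandas along these lines. This is only a sketch on a small invented series named `scores`; note that pandas uses the sample variance and standard deviation (dividing by n - 1) by default.

```python
import pandas as pd

# Small invented sample, used only for illustration.
scores = pd.Series([2, 4, 4, 5, 7, 9, 11], name="score")

mean = scores.mean()
median = scores.median()
mode = scores.mode().tolist()              # mode() can return several values
variance = scores.var()                    # sample variance (ddof=1)
std = scores.std()                         # sample standard deviation
value_range = scores.max() - scores.min()
quartiles = scores.quantile([0.25, 0.5, 0.75])

print(mean, median, mode, variance, std, value_range)
print(quartiles)

# describe() bundles count, mean, std, min, quartiles, and max in one call.
print(scores.describe())
```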

Photo by the author

3. Preparing data for EDA

Before you start exploring, you usually need to prepare data for further analysis. Data preparation involves transforming, aggregating, or cleaning data using Python’s pandas library to suit your analysis needs.

This step is tailored to the structure of the data and may include grouping, appending, merging, sorting, categorizing, and dealing with duplicates.

pandas supports each of these operations through dedicated functions and DataFrame methods.

The process of preparing tabular data is not a universal method; instead, it is shaped by the specific characteristics of our data, including its rows, columns, data types, and the values they contain.
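To make the operations mentioned in this step concrete, here is a short sketch on two invented tables, `orders` and `customers`. The table and column names are assumptions for illustration, and the order of the operations would depend on the data at hand.

```python
import pandas as pd

# Invented example tables.
orders = pd.DataFrame({
    "order_id": [1, 2, 2, 3, 4],
    "customer_id": [10, 11, 11, 10, 12],
    "amount": [25.0, 40.0, 40.0, 15.0, 60.0],
})
customers = pd.DataFrame({
    "customer_id": [10, 11, 12],
    "segment": ["retail", "retail", "business"],
})

# Dealing with duplicates: drop rows that are exact copies.
orders = orders.drop_duplicates()

# Appending: stack new rows under the existing ones.
extra = pd.DataFrame({"order_id": [5], "customer_id": [12], "amount": [30.0]})
orders = pd.concat([orders, extra], ignore_index=True)

# Merging: bring in the customer segment for each order.
merged = orders.merge(customers, on="customer_id", how="left")

# Categorizing: store the segment as a categorical column.
merged["segment"] = merged["segment"].astype("category")

# Grouping and sorting: total amount per segment, largest first.
totals = (
    merged.groupby("segment", observed=True)["amount"]
    .sum()
    .sort_values(ascending=False)
)
print(totals)
```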

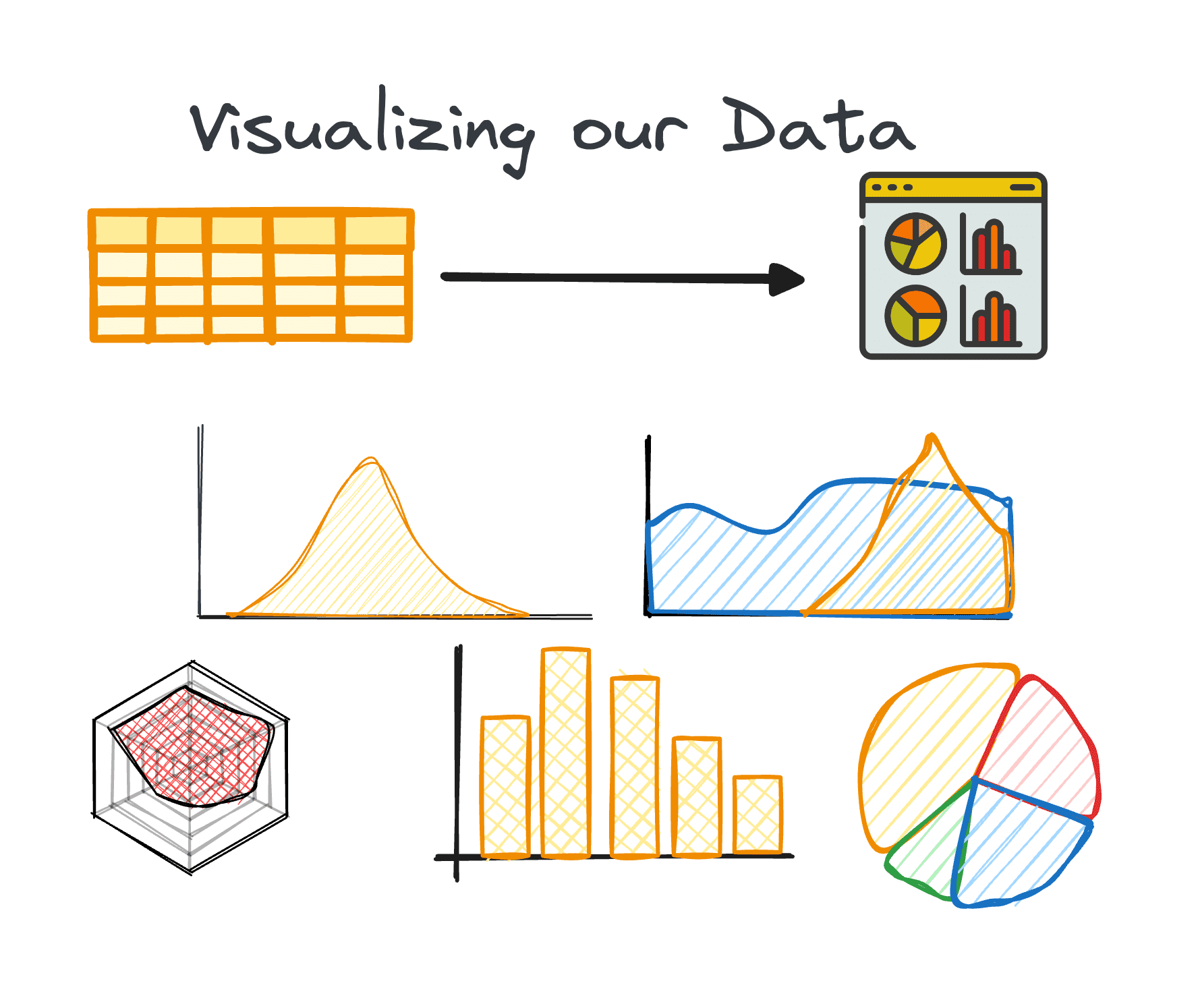

4. Data visualization

Visualization is a key element of EDA, making complex relationships and trends in a dataset easier to understand.

Using the right charts can help us identify trends in a large dataset and find hidden patterns or outliers. Python offers various libraries for data visualization, including Matplotlib and Seaborn, among others.
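As a rough illustration with Matplotlib, the sketch below draws a histogram to show the distribution of one variable and a scatter plot to show the relationship between two. The data, column names, and output file name are invented for the example.

```python
import matplotlib

matplotlib.use("Agg")  # non-interactive backend, so no display is needed

import matplotlib.pyplot as plt
import pandas as pd

# Invented trip data with one unusually long trip.
df = pd.DataFrame({
    "distance_km": [1.2, 2.5, 3.1, 3.3, 4.0, 4.4, 5.2, 6.8, 7.1, 15.0],
    "duration_min": [6, 11, 14, 15, 17, 20, 22, 30, 31, 70],
})

fig, (ax_hist, ax_scatter) = plt.subplots(1, 2, figsize=(10, 4))

# Histogram: distribution of a single variable.
ax_hist.hist(df["distance_km"], bins=5)
ax_hist.set_title("Distribution of trip distance")
ax_hist.set_xlabel("distance_km")

# Scatter plot: relationship between two numeric variables.
ax_scatter.scatter(df["distance_km"], df["duration_min"])
ax_scatter.set_title("Distance vs. duration")
ax_scatter.set_xlabel("distance_km")
ax_scatter.set_ylabel("duration_min")

fig.tight_layout()
fig.savefig("eda_overview.png")
```

In both panels the 15 km trip stands apart from the rest, which is the kind of outlier a chart makes obvious at a glance.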

Photo by the author

5. Performing variable analysis

Variable analysis can be univariate, bivariate or multivariate. Each of them provides insight into the distribution and correlations between the variables of a data set. Techniques vary depending on the number of variables analyzed:

Univariate

Univariate analysis examines each variable in our dataset on its own. During this analysis, we can discover information such as the median, mode, maximum, range, and outliers.

This type of analysis can be applied to both categorical and numeric variables.
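A minimal sketch of univariate analysis with pandas, assuming an invented table with one numeric column (`age`) and one categorical column (`plan`):

```python
import pandas as pd

# Invented data: one numeric and one categorical variable.
df = pd.DataFrame({
    "age": [23, 25, 25, 31, 35, 41, 62],
    "plan": ["basic", "pro", "basic", "basic", "pro", "basic", "enterprise"],
})

# Numeric variable: central tendency and spread.
age_summary = {
    "median": df["age"].median(),
    "mode": df["age"].mode().tolist(),
    "max": df["age"].max(),
    "range": df["age"].max() - df["age"].min(),
}
print(age_summary)

# Categorical variable: frequency of each category.
plan_counts = df["plan"].value_counts()
print(plan_counts)
```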

Bivariate

Bivariate analysis examines two selected variables together, focusing on their joint distribution and the relationship between them.

Because we are analyzing two variables at once, this type of analysis can be more complex. It covers three kinds of variable pairs: numeric-numeric, numeric-categorical, and categorical-categorical.
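One possible way to approach each of the three pair types with pandas is sketched below. The table and its column names are invented, and the chosen techniques (Pearson correlation, group means, a contingency table) are common options rather than the only ones.

```python
import pandas as pd

# Invented data with two numeric and two categorical variables.
df = pd.DataFrame({
    "hours": [1, 2, 3, 4, 5, 6],
    "score": [52, 55, 61, 64, 70, 73],
    "group": ["a", "a", "a", "b", "b", "b"],
    "passed": ["no", "no", "yes", "yes", "yes", "yes"],
})

# Numeric-numeric: Pearson correlation coefficient.
corr = df["hours"].corr(df["score"])
print(corr)

# Numeric-categorical: compare the numeric variable across categories.
mean_by_group = df.groupby("group")["score"].mean()
print(mean_by_group)

# Categorical-categorical: contingency table of joint counts.
table = pd.crosstab(df["group"], df["passed"])
print(table)
```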

Multivariate

A common challenge with large datasets is the simultaneous analysis of many variables. Although univariate and bivariate analysis methods offer valuable insights, they are usually insufficient for analyzing datasets containing many variables (typically more than five).

The problem of managing high-dimensional data, usually called the curse of dimensionality, is well documented. Having a large number of variables can be beneficial because it allows more insights to be extracted. At the same time, this advantage can work against us because of the limited number of techniques available for analyzing or visualizing many variables at once.
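Two techniques often used in this situation are a correlation matrix, which summarizes every pair of variables at once, and principal component analysis (PCA), which looks for a smaller set of directions that captures most of the variance. The sketch below is only one way to do this: the data is synthetic, the column names are placeholders, and PCA is computed directly with NumPy's SVD rather than with a dedicated library.

```python
import numpy as np
import pandas as pd

# Synthetic data: x1, x2, x3 share a common signal, x4 to x6 are pure noise.
rng = np.random.default_rng(0)
n = 200
base = rng.normal(size=n)
df = pd.DataFrame({
    "x1": base + rng.normal(scale=0.1, size=n),
    "x2": 2 * base + rng.normal(scale=0.1, size=n),
    "x3": -base + rng.normal(scale=0.1, size=n),
    "x4": rng.normal(size=n),
    "x5": rng.normal(size=n),
    "x6": rng.normal(size=n),
})

# Correlation matrix: all pairwise Pearson correlations in one table.
corr_matrix = df.corr()
print(corr_matrix.round(2))

# PCA via SVD on standardized data: share of variance per component.
standardized = (df - df.mean()) / df.std()
_, singular_values, _ = np.linalg.svd(standardized.to_numpy(), full_matrices=False)
explained_ratio = singular_values**2 / np.sum(singular_values**2)
print(explained_ratio.round(3))
```

Here the first component accounts for a large share of the variance because three of the six variables carry the same underlying signal.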

6. Time series data analysis

7. Dealing with outliers and missing values

Outliers and missing values can distort analysis results if not properly accounted for. Therefore, we should always set aside a dedicated phase for dealing with them.

Identifying, removing, or replacing these data points is critical to maintaining the integrity of the analysis. It is therefore very important to take care of them before we start analyzing our data.

- Outliers are data points that show a significant deviation from others. They usually represent unusually high or low values.

- Missing values are the absence of data points corresponding to a specific variable or observation.

A critical initial step in dealing with missing values and outliers is to understand why they are present in the dataset. This understanding often helps in choosing the most appropriate method to handle them. Additional factors to consider are the characteristics of the data and the specific analysis that will be performed.
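As a hedged example of what this phase can look like, the sketch below counts missing values, flags outliers with the 1.5 × IQR rule, caps them at the computed bounds, and fills the gaps with the median. These are common default choices, not a recommendation for every dataset, and the series is invented.

```python
import numpy as np
import pandas as pd

# Invented measurements with two missing values and one extreme value.
values = pd.Series([10.0, 12.0, 11.0, np.nan, 13.0, 12.0, 95.0, 11.0, np.nan, 14.0])

# Missing values: count them first.
n_missing = values.isna().sum()

# Outliers: 1.5 * IQR rule (quantile() ignores NaN by default).
q1 = values.quantile(0.25)
q3 = values.quantile(0.75)
iqr = q3 - q1
lower = q1 - 1.5 * iqr
upper = q3 + 1.5 * iqr
is_outlier = (values < lower) | (values > upper)
outliers = values[is_outlier]
print(n_missing, outliers.tolist())

# One handling option: cap outliers at the bounds, then impute with the median.
capped = values.clip(lower=lower, upper=upper)
filled = capped.fillna(capped.median())
print(filled.tolist())
```

Whether to cap, remove, or keep an outlier, and whether to impute or drop a missing value, depends on why the value looks the way it does.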

EDA not only makes a dataset more transparent, but also helps data professionals cope with the curse of dimensionality by providing strategies for managing multivariate datasets.

Through these careful steps, EDA with Python equips analysts with the tools necessary to extract meaningful insights from data, laying a solid foundation for all subsequent data science efforts.

Josep Ferrer is an analytics engineer from Barcelona. He graduated in physics engineering and currently works in the field of data analytics applied to human mobility. He is a part-time content creator focusing on data science and technology. Josep writes about all things artificial intelligence, covering the applications of the ongoing explosion in the field.